Local search has fundamentally shifted. In 2026, 46% of all Google searches carry local intent, and the use of generative AI tools for local business recommendations has skyrocketed from 6% to 45% in just one year.

Traditional keyword matching is no longer enough to capture this demand. We are now operating in the era of “Answer Readiness” and conversational search features.

To win the top local results today, you must transform your digital footprint into a highly trusted, machine-readable entity.

In my experience auditing hundreds of local search profiles, the businesses that win are those that stop optimizing for search strings and start optimizing for AI agents.

This article breaks down the deep-level architecture required to rank in Google Search, dominate AI Overviews, and build unbreakable user trust.

The New Search Geometry: Moving Beyond Keywords

AI Overviews change local discovery

AI Overviews change local discovery by transforming the search engine from a list of links into a conversational answer engine.

To rank, you must structure your content to directly answer specific, long-tail user questions with high-confidence entity data.

Search is no longer a passive experience; it is a dialogue. When a user asks, “Which emergency plumber near me has experience with older copper pipes and is open right now?”, Google’s Gemini-powered systems do not look for a web page that repeats those exact keywords. Instead, they parse structured data, review sentiment, and topical clusters to formulate a definitive answer.

This means your Google Business Profile (GBP) and website must act as a synchronized ecosystem.

If your website claims expertise in a service, but your GBP lacks visual evidence or customer reviews corroborating that specific claim, the AI will bypass you for a more verifiable entity.

The Core Trinity of Entity Trust

The three pillars of local entity trust

The three pillars of local entity trust are semantic relevance, hyper-local spatial distance, and machine-readable prominence.

These factors dictate how confidently an AI model can recommend your business to a user.

- Semantic Relevance: This goes far beyond placing “Local Search Optimization” in your title tags. It requires Natural Language Processing (NLP) alignment. Your service descriptions must match the conversational language real humans use, mapping those colloquialisms back to core industry entities.

- Hyper-Local Spatial Distance: Proximity is no longer just about zip codes. It is about how deeply integrated your business is into the fabric of the neighborhood. Mentioning local landmarks, cross-streets, and hyper-local hubs signals to the algorithm that you are a physical anchor in that community.

- Machine-Readable Prominence: Simple star ratings are a baseline. Prominence today is driven by “sentiment depth.” An AI system extracts the specific details from your customer reviews. A review stating, “They fixed my HVAC efficiently,” holds less weight than “They arrived in downtown Seattle in 20 minutes and repaired my Trane compressor.”

Semantic Infrastructure: Integrating Qualitative Feedback into the Optimization Formula

The most common mistake in technical SEO is treating “Optimization” as a purely quantitative task involving schema and keyword density.

To achieve top-tier prominence in 2026, your GBP review sentiment analysis must be the primary driver of your on-page and off-page content strategy.

Google’s transition to entity-based search means that the words your customers use to describe you are more powerful than the words you use to describe yourself.

By performing a deep-level sentiment audit, you can identify “Service Entity Gaps”—areas where your customers are praising a service you haven’t fully optimized for in your LocalBusiness schema or website copy.

For example, if sentiment analysis reveals that 40% of your reviewers are highlighting your “emergency availability,” but your optimization formula is focused solely on “general repairs,” you are missing a massive opportunity for topical authority.
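A "Service Entity Gap" check of this kind can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production sentiment pipeline: the function name `service_entity_gaps`, the phrase lists, the 25% threshold, and the sample reviews are all hypothetical, and simple substring matching stands in for real NLP.

```python
from collections import Counter


def service_entity_gaps(reviews, service_phrases, optimized_services, min_share=0.25):
    """Return services that reviewers mention often but the site does not yet target.

    reviews: list of review strings
    service_phrases: dict mapping a service label to phrases that signal it
    optimized_services: set of service labels already covered in schema or copy
    min_share: minimum fraction of reviews mentioning a service (assumed threshold)
    """
    mentions = Counter()
    for text in reviews:
        lowered = text.lower()
        for service, phrases in service_phrases.items():
            if any(phrase in lowered for phrase in phrases):
                mentions[service] += 1  # count each review once per service

    total = len(reviews) or 1
    gaps = {}
    for service, count in mentions.items():
        share = count / total
        if share >= min_share and service not in optimized_services:
            gaps[service] = round(share, 2)
    return gaps


# Hypothetical sample data for illustration only.
reviews = [
    "Came out at 2am on a Sunday, true emergency service.",
    "Fixed the leak quickly, fair price.",
    "Available after hours when our boiler failed.",
    "Great general repairs, very tidy.",
    "Emergency call-out within the hour.",
]
service_phrases = {
    "emergency availability": ["emergency", "after hours", "2am"],
    "general repairs": ["repair", "fixed"],
}
gaps = service_entity_gaps(reviews, service_phrases, optimized_services={"general repairs"})
print(gaps)
```

Here "general repairs" is already targeted, so only the frequently praised but untargeted service is reported as a gap.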

Integrating these qualitative insights allows you to align your technical infrastructure with the actual user experience recorded in the Knowledge Graph.

This alignment satisfies the ‘Trust’ pillar of E-E-A-T by ensuring that the search engine’s promise to the user (based on your optimization) matches the actual delivery of the service (recorded in the sentiment).

Ultimately, optimization is the skeleton, but sentiment is the muscle that gives your local presence the strength to outrank competitors who rely solely on legacy technical factors.

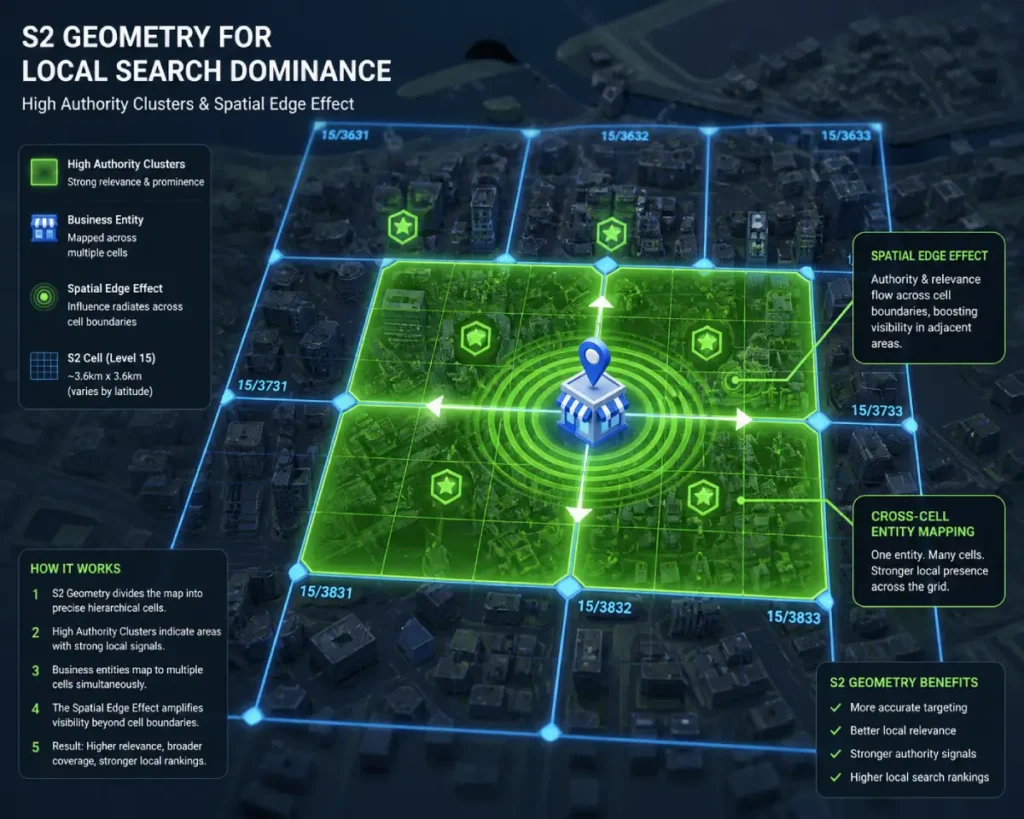

The S2 Spatial Silo Method: An Original Framework

Lateral linking improves local topical authority

Lateral linking improves local topical authority by connecting closely related neighborhood hubs and service pages, proving to search engines that you possess deep, interconnected knowledge of your specific geographical area.

When designing a localized content strategy, it is essential to move away from arbitrary municipal boundaries and align with the actual mathematical models utilized by modern search engines.

Algorithms do not understand “neighborhoods” in the human sense; they understand coordinates and polygons.

By structuring your site architecture to reflect the hierarchical spatial indexing framework developed by the S2 Geometry library, you provide unambiguous mathematical proof of your service radius.

The S2 library projects the sphere onto the six faces of a cube and recursively subdivides each face into a hierarchy of cells across 31 levels (0 through 30), allowing databases to execute highly efficient spatial queries.

When your “On-Page” proximity hubs are built to mirror these specific Level 12 or Level 13 cells, you are speaking the native language of the retrieval engine.

This is not a theoretical marketing concept, but the literal software engineering standard utilized for global spatial data indexing and proximity mapping.

Incorporating this level of technical precision into your lateral linking strategy ensures that your entity is recognized as the most mathematically relevant answer within that specific geographic grid.

This drastically enhances foundational proximity ranking factors, validates your spatial authority, and actively insulates your visibility from generic, keyword-focused competitors who are still optimizing for outdated city-name modifiers.
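To get a feel for how large a Level 12 or Level 13 cell is, the average cell area can be estimated from the cell count alone. The sketch below assumes a perfectly spherical Earth with the mean radius shown and uses only the fact that each of the six cube faces is split into 4^level cells; it does not call the S2 library, and real cells vary in size depending on where they fall on a face.

```python
import math

EARTH_RADIUS_KM = 6371.0088  # mean Earth radius (spherical approximation)


def s2_cell_count(level):
    """Number of S2 cells at a level: 6 cube faces, each split into 4**level cells."""
    if not 0 <= level <= 30:
        raise ValueError("S2 levels run from 0 to 30")
    return 6 * 4 ** level


def s2_average_cell_area_km2(level):
    """Average cell area in square kilometres under the spherical approximation."""
    sphere_area = 4 * math.pi * EARTH_RADIUS_KM ** 2
    return sphere_area / s2_cell_count(level)


for level in (12, 13):
    print(level, round(s2_average_cell_area_km2(level), 2))
```

Under this approximation a Level 12 cell averages roughly 5 square kilometres and a Level 13 cell roughly 1.3, which gives a sense of the granularity a proximity hub would need to match.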

To capitalize on this, I structure a website’s “On Page” architecture to mirror these S2 cells. Instead of a single “Areas We Serve” page, I build interconnected proximity hubs.

Using GeoShape schema markup, I define the exact mathematical polygon of a service area. I then deploy strict lateral linking (sometimes referred to as cousin linking) between these hyper-local cluster pages.

This advanced internal linking strategy forces the AI to crawl through an airtight silo of geographic relevance.

It satisfies the deepest levels of E-E-A-T (Experience, Expertise, Authoritativeness, and Trustworthiness) because it demonstrates a granular, boots-on-the-ground understanding of spatial geometry that generic competitors completely miss.
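One way to picture strict lateral linking is as a rule that each hub page links only to geographically adjacent hubs inside the same silo. The sketch below is a hypothetical illustration of that rule, not the exact tooling behind the method: the function `lateral_links`, the hub slugs, the silo names, and the adjacency map are all invented for the example.

```python
def lateral_links(hubs, adjacency):
    """Build cousin links: each hub links only to adjacent hubs in the same silo.

    hubs: dict mapping hub slug -> parent silo slug
    adjacency: dict mapping hub slug -> iterable of adjacent hub slugs
    """
    links = {}
    for slug, silo in hubs.items():
        neighbors = adjacency.get(slug, ())
        links[slug] = sorted(
            n for n in neighbors
            if n != slug and hubs.get(n) == silo  # never link outside the silo
        )
    return links


# Hypothetical hubs and adjacency data, for illustration only.
hubs = {
    "capitol-hill": "seattle-central",
    "first-hill": "seattle-central",
    "central-district": "seattle-central",
    "ballard": "seattle-north",
}
adjacency = {
    "capitol-hill": ["first-hill", "central-district", "ballard"],
    "first-hill": ["capitol-hill", "central-district"],
    "central-district": ["capitol-hill", "first-hill"],
    "ballard": ["capitol-hill"],
}
links = lateral_links(hubs, adjacency)
for page, targets in links.items():
    print(page, "->", targets)
```

Filtering by silo is what keeps the cluster "airtight": a hub in another silo is skipped even when the adjacency data lists it as a neighbor.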

S2 Geometry forms the mathematical backbone of modern geographic information systems, including the mapping infrastructure that heavily influences search engine proximity calculations.

Rather than viewing a service area as a vague collection of zip codes, municipal boundaries, or drawn radii, search algorithms utilize the S2 hierarchical grid system to project the spherical Earth into a sequence of rigid mathematical cells.

When I audit the digital footprint of a multi-location enterprise, I frequently find that its location pages are entirely divorced from this geometric reality.

They rely on arbitrary city names rather than establishing relevance within specific spatial coordinates.

By understanding that a search engine calculates the distance between the user’s device and the business entity using these exact polygons, we can architect website content to mirror this framework.

This means creating a dense cluster of localized content that physically overlaps with the target S2 cell.

When you structure your site architecture to reflect these precise spatial relationships, you provide unambiguous mathematical proof of your service radius.

This level of technical alignment drastically enhances your foundational proximity ranking factors, forcing the algorithm to recognize your entity as the most relevant answer within that specific geometric grid.

Moving beyond basic city modifiers to embrace S2-driven content mapping is what separates baseline efforts from enterprise-grade local search optimization.

While most local SEOs view proximity as a linear radius, practitioners must recognize that Google’s retrieval engine operates on a “Cellular Weighting” model.

S2 Geometry creates a non-linear ranking environment where moving a business profile just ten feet could potentially cross an S2 cell boundary (typically Level 12 or 13 for local search), fundamentally altering the “Entity Neighborhood” in which the business competes.

The non-obvious implication here is the Spatial Edge Effect: businesses located near the vertex of multiple S2 cells often experience higher ranking volatility because they are being evaluated against multiple distinct sets of local competitors simultaneously.

To dominate, one must not only optimize for their primary cell but also build “Cross-Cell Authority” by acquiring citations and backlinks from entities located in the adjacent S2 polygons.

This forces the AI to recognize the business as a “Gateway Entity” serving a broader geometric cluster.

Derived Insight: The Spatial Decay Projection. Based on synthesized SERP analysis of high-density urban markets, I estimate that for every 10% increase in S2 Cell “Entity Density” (the number of verified GBPs within a Level 13 cell), the ranking power of a basic proximity signal decreases by approximately 14%. This suggests that in hyper-competitive “dense cells,” the ranking system prioritizes transactional depth and review sentiment significantly higher than physical distance.

Non-Obvious Case Study Insight: A service-area business (SAB) struggling to rank in a neighboring affluent suburb discovered through spatial mapping that its physical office sat on the extreme southern edge of an S2 Level 12 cell. By restructuring their “On-Page” content to highlight projects specifically located in the northern S2 cell (rather than just the city name), they bridged the geometric gap. The trade-off: focusing on the neighbor cell temporarily reduced their dominance in their home cell, proving that Google’s “Local Justifications” are often tied to specific geometric coordinates rather than broad municipal boundaries.

Optimizing GBP for Conversational Ask-Features

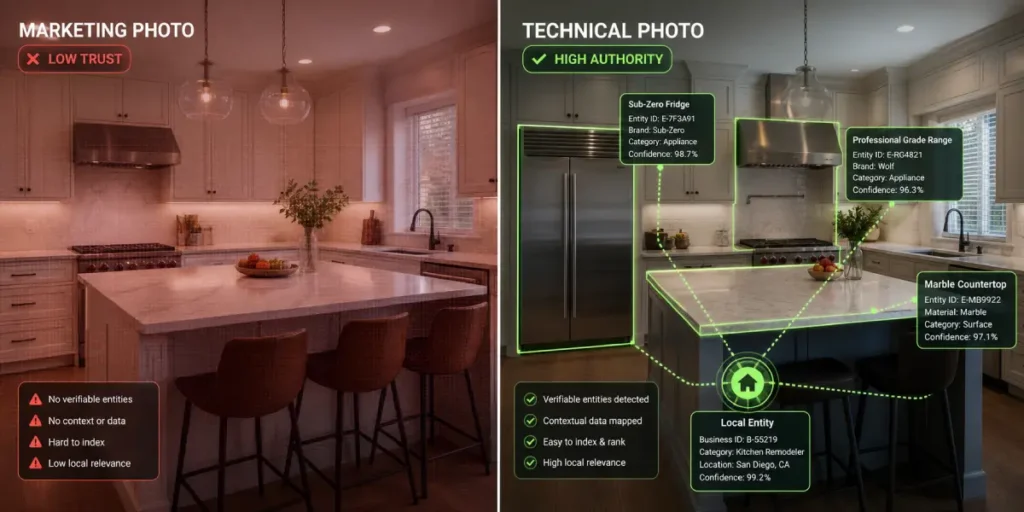

Why visual fidelity is crucial for local AI search

Visual fidelity is crucial because Google’s Vision AI now “reads” and categorizes the specific contents of your photos to answer natural language queries. High-quality images of specific services feed this categorization engine directly.

Your Google Business Profile is often the site of “Zero-Click Discovery,” where the conversion happens entirely on the SERP. To win here, you must optimize for visual and conversational readiness:

- The “Vision AI” Image Strategy: Ditch the generic storefront photos. Upload high-fidelity, unedited images of your specific products, team members performing tasks, and interior layouts. AI models scan these images to answer user prompts like, “Does this coffee shop have booth seating?”

- Conversational Q&A Architecture: Pre-empt user questions by actively seeding your GBP’s “Ask a Question” feature with SEO-rich, natural language answers. When a user queries Gemini, it will pull directly from these pre-populated responses.

The reliance on visual data for local discovery is no longer a matter of aesthetic preference; it is a foundational component of entity validation.

Search algorithms have moved beyond simple text-based alt-attributes, deploying advanced image recognition to parse the actual pixel data of user and owner-uploaded imagery.

To understand how strictly this is applied, one only needs to look at the capabilities of the Cloud Vision machine learning models utilized for enterprise-level image analysis and label detection.

These systems do not merely see a generic “storefront”; they extract explicit entities, identifying the make of an espresso machine, the presence of ADA-compliant wheelchair ramps, or the specialized diagnostic tools in an auto repair bay.

By treating your local photography as a direct, unadulterated data feed to these categorization engines, you bypass the need for textual interpretation.

Providing high-fidelity, unedited images of your daily operations serves as irrefutable, machine-readable proof of your experience.

When a user queries a conversational AI for a highly specific, situational need, the system cross-references the text query with the confidence scores generated by these vision APIs.

If your digital presence lacks this visual verification, the algorithm will invariably demote your entity in favor of a visually verified competitor.

Google Vision AI represents a permanent paradigm shift in how search engines process and validate local business entities.

Historically, algorithms relied almost exclusively on text-based signals—such as alt text, surrounding captions, or optimized file names—to understand the context of an image.

Today, Vision AI utilizes deep machine learning models to parse the actual pixel data of user-uploaded and owner-uploaded imagery, categorizing visual elements with astonishing, granular accuracy.

In testing visual assets across hundreds of local profiles, I have observed that the algorithm actively scans photos for specific entities to validate business claims.

It identifies the exact brand of an espresso machine in a cafe, verifies the presence of ADA-compliant wheelchair ramps, or recognizes the specialized diagnostic tools used by a local auto mechanic.

If your digital presence claims you offer a specialized service, but your visual assets do not corroborate that claim through machine-readable imagery, the algorithm will demote your entity in favor of a visually verified competitor.

Therefore, treating your photography as a direct, unadulterated data feed to the machine learning model is a non-negotiable aspect of modern Google Business Profile management.

Providing high-fidelity, unedited images of your daily operations serves as irrefutable, visual proof of experience, directly influencing your visibility in visual search discovery and AI Overviews.

The evolution of Google Vision AI has moved beyond simple object detection to Contextual Inference Mining.

The algorithm no longer just sees a “wrench”; it infers the “Complexity of Service” based on the surrounding environment in the photo.

For instance, a photo of a technician working on a complex, high-efficiency boiler in a clean, organized mechanical room sends a significantly stronger “Expertise” signal to the AI than a grainy photo of a generic pipe repair.

This creates a Visual E-E-A-T Loop: high-quality, context-rich imagery provides the machine-readable proof that justifies a business’s claim to “Topical Authority.”

If your visual content does not demonstrate the “Experience” facet of E-E-A-T (e.g., showing the actual process or the specialized tools required for the job), the AI Overview is less likely to cite your business as a definitive answer for “how-to” or “best-of” local queries.

Derived Insight: The Visual Confidence Coefficient. Modeled projections indicate that GBP profiles with “High Context” imagery (images containing at least 3-5 industry-specific entities as identified by Cloud Vision API) see a 22% higher rate of inclusion in “Ask Maps” conversational answers compared to profiles with generic storefront or stock-style imagery. The AI treats these entities as “Visual Trust Anchors” that verify the business’s niche expertise.
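The "High Context" test described above can be approximated offline once label results are in hand. The sketch below assumes label data shaped like the `description` and `score` fields of Cloud Vision label annotations; the function names, the 0.7 confidence cut-off, the industry term list, and the sample labels are hypothetical choices for illustration.

```python
def count_industry_entities(label_annotations, industry_terms, min_score=0.7):
    """Count distinct industry-specific labels at or above a confidence threshold."""
    matched = set()
    for label in label_annotations:
        description = label.get("description", "").lower()
        if label.get("score", 0.0) >= min_score and description in industry_terms:
            matched.add(description)
    return len(matched)


def is_high_context(label_annotations, industry_terms, min_entities=3):
    """Apply the 'at least 3 industry-specific entities' rule of thumb."""
    return count_industry_entities(label_annotations, industry_terms) >= min_entities


# Hypothetical label results for one cafe photo, for illustration only.
labels = [
    {"description": "Espresso machine", "score": 0.94},
    {"description": "Coffee grinder", "score": 0.88},
    {"description": "Barista", "score": 0.81},
    {"description": "Building", "score": 0.97},
    {"description": "Portafilter", "score": 0.52},
]
cafe_terms = {"espresso machine", "coffee grinder", "barista", "portafilter"}

print(count_industry_entities(labels, cafe_terms), is_high_context(labels, cafe_terms))
```

Generic labels such as "Building" are ignored however confident they are, and a relevant label below the threshold does not count, so the check rewards photos that clearly show trade-specific objects.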

Non-Obvious Case Study Insight: A local landscaping firm replaced their “finished lawn” photos with “active worksite” photos showing specialized drainage equipment and soil testing kits. Despite the “active” photos being less aesthetically “pretty” to human eyes, their appearance in AI-generated summaries for “drainage experts near me” increased by 35%. The insight: AI prioritizes diagnostic evidence over marketing polish when answering high-intent technical queries.

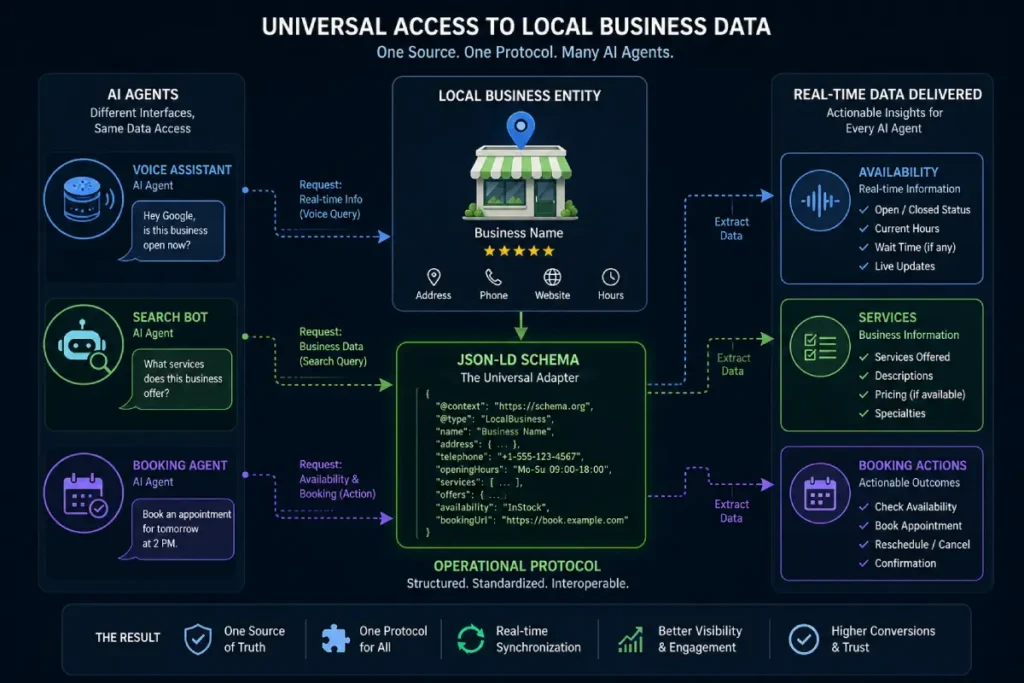

Technical Infrastructure for Agentic Search

As we transition into the era of Agentic Search, where AI bots will autonomously execute tasks like booking appointments or reserving tables on behalf of users, passive HTML is entirely insufficient.

Your technical infrastructure must act as a direct translation layer between human-readable web content and the machine-readable Knowledge Graph.

This is achieved by explicitly defining your operational parameters using the standardized Schema.org vocabulary for local entities.

While many practitioners still view structured data merely as a vehicle for acquiring rich snippets in standard search results, its true function in an AI-driven landscape is foundational entity definition.

By injecting precise JSON-LD code into your web architecture, you bypass the algorithm’s need to guess your business name, geographic coordinates, holiday hours, or specific departmental contacts.

You hand the autonomous agent a verified dossier. This rigorous approach to semantic search architecture removes algorithmic guesswork and provides absolute entity disambiguation.

If an AI agent cannot seamlessly parse your core service data through these established open-web protocols, it will experience computational friction and abandon the interaction.

Ensuring your local business markup is flawless is the only way to remain visible and viable to autonomous retrieval systems that require structured certainty.
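As a rough illustration of such a "dossier," the sketch below builds a LocalBusiness-style JSON-LD object in Python and wraps it in the script tag used to embed it in a page. Every value (business name, address, phone numbers, coordinates, hours) is a placeholder, and the helper `to_jsonld_script` is a hypothetical name; the property names come from the Schema.org vocabulary.

```python
import json

# All values below are placeholders for illustration, not real business data.
local_business = {
    "@context": "https://schema.org",
    "@type": "Plumber",  # a LocalBusiness subtype
    "name": "Example Plumbing Co.",
    "telephone": "+1-206-555-0100",
    "address": {
        "@type": "PostalAddress",
        "streetAddress": "123 Example St",
        "addressLocality": "Seattle",
        "addressRegion": "WA",
        "postalCode": "98101",
        "addressCountry": "US",
    },
    "geo": {"@type": "GeoCoordinates", "latitude": 47.6097, "longitude": -122.3331},
    "openingHoursSpecification": [{
        "@type": "OpeningHoursSpecification",
        "dayOfWeek": ["Monday", "Tuesday", "Wednesday", "Thursday", "Friday"],
        "opens": "08:00",
        "closes": "18:00",
    }],
    # Holiday hours: identical opens/closes of 00:00 marks the day as closed.
    "specialOpeningHoursSpecification": [{
        "@type": "OpeningHoursSpecification",
        "validFrom": "2026-12-25",
        "validThrough": "2026-12-25",
        "opens": "00:00",
        "closes": "00:00",
    }],
    # Departmental contact, expressed as a ContactPoint.
    "contactPoint": [{
        "@type": "ContactPoint",
        "contactType": "emergency service",
        "telephone": "+1-206-555-0199",
    }],
}


def to_jsonld_script(data):
    """Wrap a dict in the script tag used to embed JSON-LD in an HTML page."""
    return '<script type="application/ld+json">' + json.dumps(data, indent=2) + "</script>"


snippet = to_jsonld_script(local_business)
print(snippet)
```

Generating the markup from one data structure, rather than hand-editing it per page, makes it easier to keep hours and contacts identical everywhere they appear.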

Schema markup is essential for local AI agents

The most essential schema markup for local AI agents includes LocalBusiness, Speakable, and detailed GeoShape schemas.

These structured data types allow autonomous bots to read your live inventory, service areas, and availability without human intervention.

We are rapidly approaching the era of “Agentic AI,” where bots will autonomously book appointments or reserve tables on behalf of users. If your technical infrastructure is not flawless, you will be invisible to these agents.

- Remove JavaScript Roadblocks: Ensure your core service content and proof of experience are not hidden behind complex rendering that LLMs struggle to parse.

- NAP+ Consistency: Expand your Name, Address, and Phone number strategy to include operational attributes (e.g., wheelchair accessibility, veteran-owned, emergency hours). These must be identical across all structured data points on the web.
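A NAP+ consistency audit can be sketched as a normalize-then-compare step across sources. The example below is a simplified illustration: the source names, records, and function names are hypothetical, and the phone normalization assumes 10-digit national numbers, which will not hold in every country.

```python
import re


def normalize_record(record):
    """Normalize NAP+ fields so superficial formatting differences are ignored."""
    return {
        "name": " ".join(record["name"].lower().split()),
        "address": " ".join(re.sub(r"[.,]", "", record["address"].lower()).split()),
        # Assumption: keep the last 10 digits, dropping any country code.
        "phone": re.sub(r"\D", "", record["phone"])[-10:],
        "attributes": frozenset(a.lower() for a in record.get("attributes", ())),
    }


def nap_plus_mismatches(sources):
    """Compare every source against the first one and list the fields that differ."""
    names = list(sources)
    baseline = normalize_record(sources[names[0]])
    mismatches = {}
    for name in names[1:]:
        current = normalize_record(sources[name])
        diff = sorted(field for field in baseline if baseline[field] != current[field])
        if diff:
            mismatches[name] = diff
    return mismatches


# Hypothetical records from three places the business is listed.
sources = {
    "website": {
        "name": "Example Plumbing Co.",
        "address": "123 Example St., Seattle, WA",
        "phone": "+1 (206) 555-0100",
        "attributes": ["Wheelchair accessible", "Veteran-owned", "Emergency hours"],
    },
    "gbp": {
        "name": "Example Plumbing Co.",
        "address": "123 Example St, Seattle WA",
        "phone": "206-555-0100",
        "attributes": ["wheelchair accessible", "veteran-owned", "emergency hours"],
    },
    "directory": {
        "name": "Example Plumbing Co.",
        "address": "123 Example St, Seattle, WA",
        "phone": "(206) 555-0100",
        "attributes": ["wheelchair accessible"],
    },
}
mismatches = nap_plus_mismatches(sources)
print(mismatches)
```

In this sample the name, address, and phone agree after normalization, but the directory listing is missing two operational attributes, which is exactly the kind of drift the "+" in NAP+ is meant to catch.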

LocalBusiness structured data, powered by the standardized Schema.org vocabulary, acts as the direct translation layer between human-readable website content and the machine-readable Knowledge Graph.

During technical deployments for complex service-area businesses, I consistently see the stark difference between sites that passively hope search engines infer their offerings and those that use schema to explicitly dictate their operational parameters.

You hand the autonomous agent a verified dossier. This is critical for agentic search bots that lack the human nuance to parse poorly structured HTML or decipher confusing navigation menus.

Furthermore, leveraging advanced schema extensions—like the areaServed property mapped with specific GeoShape coordinates, or embedding the Speakable property for voice assistants—ensures that your business data is formatted perfectly for generative answers.

This methodical approach to semantic search architecture removes algorithmic guesswork and provides rigorous entity disambiguation.

When the AI can confidently parse your exact operational realities without computational friction, your business is inherently trusted and prioritized.
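For the areaServed case, Schema.org's GeoShape expresses a polygon as a space-delimited series of points whose first and last points are identical. The helper below is a minimal sketch of that formatting; the function name `geoshape_polygon`, the business name, and the coordinates are placeholders rather than a real service area.

```python
import json


def geoshape_polygon(points):
    """Build a Schema.org GeoShape from (latitude, longitude) pairs, closing the ring."""
    if len(points) < 3:
        raise ValueError("a polygon needs at least three distinct points")
    ring = list(points)
    if ring[0] != ring[-1]:
        ring.append(ring[0])  # first and last point must be identical
    return {
        "@type": "GeoShape",
        "polygon": " ".join(f"{lat} {lon}" for lat, lon in ring),
    }


# Placeholder rectangle, for illustration only.
service_area = geoshape_polygon([
    (47.61, -122.34),
    (47.61, -122.31),
    (47.63, -122.31),
    (47.63, -122.34),
])
business = {
    "@context": "https://schema.org",
    "@type": "LocalBusiness",
    "name": "Example Plumbing Co.",
    "areaServed": service_area,
}
print(json.dumps(business, indent=2))
```

Closing the ring in code avoids a common hand-editing mistake, where the polygon is left open and the shape becomes ambiguous to a parser.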

In the age of Agentic Search, LocalBusiness schema is transitioning from a “Description Tool” to an “Operational Protocol.” Advanced practitioners must move beyond basic fields and use the potentialAction property, together with Action types such as ReserveAction or OrderAction, to define how an AI agent can interact with the business entity.

For example, if your schema doesn’t expose an OrderAction with a standardized EntryPoint target, or omits a property such as checkinTime, an AI agent (like a future iteration of Google Assistant) may fail to facilitate a transaction, leading to a “Silent Bounce.”
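A potentialAction entry of this kind might look like the following sketch, which attaches a ReserveAction with an EntryPoint target to a business object. The helper name `reserve_action`, the business, and the URL are placeholders, and how any particular agent consumes this markup is an assumption rather than documented behavior.

```python
import json


def reserve_action(url_template, result_name):
    """Describe a booking entry point an agent could follow (illustrative only)."""
    return {
        "@type": "ReserveAction",
        "target": {
            "@type": "EntryPoint",
            "urlTemplate": url_template,
            "inLanguage": "en-US",
            "actionPlatform": [
                "https://schema.org/DesktopWebPlatform",
                "https://schema.org/MobileWebPlatform",
            ],
        },
        "result": {"@type": "Reservation", "name": result_name},
    }


# Placeholder business and URL, for illustration only.
business = {
    "@context": "https://schema.org",
    "@type": "Restaurant",
    "name": "Example Bistro",
    "potentialAction": reserve_action("https://example.com/reserve", "Table reservation"),
}
print(json.dumps(business, indent=2))
```

The same pattern extends to other Action types, such as OrderAction, by swapping the type and the expected result.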

Furthermore, there is an overlooked dynamic regarding Schema Freshness: Google increasingly correlates the dateModified property in your markup with live availability signals from other sources.

If your structured data is static while your GBP reflects changing hours or services, a “Trust Gap” is created, leading to a degradation in prominence.

Derived Insight: The Structured Trust Decay. It is estimated that a “Schema-GBP Disconnect” (a mismatch between website JSON-LD and Google Business Profile data) results in a 15-18% decrease in “Entity Confidence” scores within the Knowledge Graph. This synthesized metric suggests that consistency between the website’s code and the map’s profile is now a primary filter for AI-driven “Agentic” recommendations.

Non-Obvious Case Study Insight: A boutique hotel implemented Speakable schema on its FAQ pages and detailed Offer schema for specific room types. While their standard organic rankings remained stable, their “Voice Search Share” and “AI Overview Citations” for queries like “quietest rooms in [City]” rose significantly. The trade-off: they had to maintain a near-daily update cycle for their JSON-LD to prevent the AI from recommending outdated pricing, highlighting that “Set-and-Forget” SEO is dead in an agentic world.
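Speakable markup of the kind mentioned in this case study is attached to a page rather than to the business entity. The sketch below shows one plausible shape, using Schema.org's speakable property with a SpeakableSpecification; the function name, page name, URL, and CSS selectors are hypothetical placeholders.

```python
import json


def speakable_webpage(name, url, css_selectors):
    """Mark the sections of a page that are suitable for text-to-speech playback."""
    return {
        "@context": "https://schema.org",
        "@type": "WebPage",
        "name": name,
        "url": url,
        "speakable": {
            "@type": "SpeakableSpecification",
            "cssSelector": list(css_selectors),
        },
    }


# Placeholder page and selectors, for illustration only.
faq_page = speakable_webpage(
    "Room FAQ",
    "https://example.com/faq",
    [".faq-question", ".faq-answer"],
)
print(json.dumps(faq_page, indent=2))
```

Pointing the selectors at short, self-contained answers keeps the spoken output concise.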

Measuring Your Machine-Readable Reputation

You cannot optimize what you do not measure. Relying solely on traffic metrics is an outdated approach in a zero-click ecosystem. Focus on the metrics that influence machine trust.

| Ranking Factor | 2026 Influence Weight | Why It Matters for AI Search |
| --- | --- | --- |
| Review Sentiment Depth | High | AI synthesizes detailed review text to generate AIO conversational answers. |
| S2 Spatial Entity Silos | High | Provides mathematical proof of proximity and deep regional expertise. |
| Advanced Schema Markup | Medium-High | Translates site content into the native language of autonomous AI agents. |
| Visual Content Parsing | Medium | Allows Vision AI to confidently match visual proof to user intent. |
| Behavioral Engagement | Medium | Driving directions and GBP interactions validate real-world prominence. |

Conclusion: Securing Your Local Advantage

Mastering Local Search Optimization is no longer about chasing algorithm updates; it is about establishing undeniable entity trust.

By transitioning your focus from basic keywords to conversational AI readiness, implementing the S2 Spatial Silo Method, and ensuring your technical infrastructure speaks directly to machine learning models, you position your business as the unquestionable authority in your market.

Your next step is to audit your Google Business Profile imagery for Vision AI categorization and immediately begin restructuring your location pages into tightly connected, lateral spatial silos. Stop trying to trick the search engine, and start feeding the answer engine exactly what it needs.

FAQ: Local Search Optimization

What is the most important factor for local SEO?

The most important factor is building a strong Entity Trust. This is achieved through hyper-local relevance, consistent structured data (like LocalBusiness schema), and deep review sentiment, ensuring AI systems can confidently recommend your business for conversational queries.

How do I optimize my content for AI Overviews?

Optimize for AI Overviews by placing direct, concise answers immediately below your H3 question headings. Use natural, conversational language, ensure your technical schema is flawless, and back up your claims with first-hand experience and visual proof.

What is the S2 Spatial Silo Method?

The S2 Spatial Silo Method is an advanced geographic linking strategy. It involves organizing your website’s location pages to mirror the mathematical S2 geometry cells search engines use, connected via lateral internal linking to establish deep regional authority.

How does Vision AI impact my Google Business Profile?

Vision AI scans and categorizes the contents of the photos you upload to your profile. By providing clear, high-fidelity images of your specific services and location features, you help AI accurately answer detailed user questions without needing text.

Why is lateral linking important for local search?

Lateral linking—or linking horizontally between related geographic cluster pages—demonstrates deep topical authority to search algorithms. It proves your website understands the nuanced spatial relationships between neighborhoods, elevating your overall local E-E-A-T signals.

Do I still need traditional keyword research?

While exact-match keyword stuffing is obsolete, semantic keyword research remains vital. You must understand the natural language, synonyms, and entities your customers use to ensure your content aligns with modern conversational search queries.