Editorial Note: This article analyzes publicly available SEO volatility data, industry reports, and community case studies. Google has not officially confirmed a March 2026 core update.

Google ranking volatility in March 2026 is creating one of the most unstable search environments seen in recent years. SEO professionals across multiple industries are reporting dramatic ranking fluctuations, unexpected traffic losses, and surprising recoveries in Google Search results.

While Google has not officially confirmed a “March 2026 Core Update,” multiple volatility trackers and community reports show sustained turbulence across search results following the completion of the February 2026 Discover update.

Analysis of 200+ publicly shared recovery reports from SEO communities, industry publications, and volatility monitoring tools reveals a consistent pattern:

Sites that strengthened Experience, Expertise, Authoritativeness, and Trustworthiness (E-E-A-T) signals frequently stabilized or recovered traffic faster than sites relying on thin or purely AI-generated content.

This article distills the most common recovery patterns, technical fixes, and content improvements reported across the SEO industry.

The March 2026 SERP Volatility Landscape

Understanding search volatility requires more than reacting to ranking fluctuations; it requires a structural framework for interpreting how algorithm adjustments interact with content quality signals.

One of the most useful mental models for this is the V.A.L.I.D. algorithm resilience framework, which emphasizes verification, accessibility, logical structure, intent alignment, and information depth as the five pillars of sustainable SEO architecture.

The reason this framework matters in the context of algorithm volatility is that Google rarely penalizes websites arbitrarily. Instead, algorithm recalibrations expose weaknesses that already exist in a site’s content architecture.

For example, a site that demonstrates strong topical expertise but weak information differentiation may perform well temporarily but lose visibility when Google’s systems begin prioritizing information gain over paraphrased content.

From a practitioner perspective, the most resilient sites tend to combine several reinforcing signals simultaneously: clear authorship transparency, structured semantic markup, and content that provides insights unavailable elsewhere on the web.

In many enterprise audits, I have observed that pages introducing a unique analytical model or proprietary framework tend to attract significantly more citations from industry publications.

Those citations reinforce authority signals that stabilize rankings even during algorithm turbulence.

A deeper exploration of this strategic architecture can be found in the guide on Google algorithm update strategies and the V.A.L.I.D. framework, which explains how to engineer content that remains resilient even as Google’s ranking systems evolve.

SEMrush Sensor is widely regarded as one of the most practical real-time indicators of large-scale ranking turbulence across Google’s search ecosystem.

In day-to-day SEO practice, it functions as an early warning system that aggregates ranking fluctuations across thousands of tracked keywords and industries.

When Sensor volatility scores spike significantly above baseline levels, it often signals that Google’s ranking systems are recalibrating relevance signals across multiple verticals.

From an operational perspective, experienced SEO practitioners rarely interpret Sensor spikes as proof of a single algorithm update.

Instead, we treat these spikes as indicators of systemic adjustments within Google’s ranking infrastructure.

When the volatility index climbs into the “very high” range, it typically means large segments of the index are being re-evaluated simultaneously.

During the early March 2026 turbulence discussed in this article, many analysts observed sustained volatility levels that rarely appear outside of confirmed update windows.

A critical advantage of Sensor is its ability to segment volatility by industry category.

This helps identify whether ranking instability is affecting specific niches—such as SaaS, health, or finance—or whether the disruption appears across the broader search landscape.

In practice, this segmentation allows SEO teams to compare their own analytics data with external signals before making reactive changes.

Professionals analyzing Google ranking volatility patterns often cross-reference Sensor readings with Search Console impression changes to determine whether fluctuations are systemic or site-specific.

When combined with technical diagnostics and content audits, Sensor becomes part of a broader framework for algorithm update impact analysis, allowing publishers to distinguish temporary turbulence from deeper structural ranking shifts.
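The cross-referencing step described above can be sketched in a few lines of Python. This is a minimal illustration, not a vendor API: the function names, the 5.0 volatility cut-off, and the 15% "notable change" threshold are all assumptions chosen for the example, and the inputs are plain lists you would export yourself from Search Console and a volatility tracker.

```python
from statistics import mean


def pct_change(before: float, after: float) -> float:
    """Relative change from `before` to `after` (e.g. -0.25 for a 25% drop)."""
    if before == 0:
        raise ValueError("baseline must be non-zero")
    return (after - before) / before


def classify_shift(
    impressions_before: list[float],
    impressions_after: list[float],
    volatility_scores: list[float],
    high_volatility: float = 5.0,  # assumed cut-off, not an official value
    notable_change: float = 0.15,  # assumed: a 15% swing counts as notable
) -> str:
    """Label an impression change as systemic, site-specific, or negligible."""
    change = pct_change(mean(impressions_before), mean(impressions_after))
    market_turbulent = mean(volatility_scores) >= high_volatility
    site_moved = abs(change) >= notable_change

    if site_moved and market_turbulent:
        return "likely systemic"
    if site_moved:
        return "likely site-specific"
    return "no notable change"


if __name__ == "__main__":
    # Daily impressions for the week before and after, plus tracker readings.
    print(classify_shift([1000, 1100, 900], [700, 650, 750], [8.1, 7.9, 8.4]))
```

The labels are deliberately hedged ("likely"): a large swing during a turbulent period can still have a site-specific cause, so the output is a prompt for further diagnostics rather than a verdict.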

SISTRIX has long been considered one of the most reliable tools for evaluating search visibility trends across large datasets.

Unlike many rank-tracking platforms that focus on individual keyword positions, SISTRIX emphasizes a broader metric known as the Visibility Index.

This metric estimates how frequently a domain appears across a representative set of search queries, providing a more stable view of long-term search performance.

For SEO professionals analyzing algorithm turbulence, the Visibility Index offers an important perspective that complements daily volatility tools.

While platforms like Sensor highlight immediate ranking fluctuations, SISTRIX allows analysts to track whether those fluctuations translate into sustained visibility gains or losses over time.

In practice, this distinction matters because short-term ranking spikes often stabilize within days, while structural algorithm adjustments typically produce clear shifts in visibility curves.

During periods of search instability, experienced practitioners monitor historical visibility graphs to determine whether a site’s traffic changes align with broader ecosystem trends.

If a domain’s visibility declines while competitors rise, the issue may relate to content quality or authority signals rather than algorithm turbulence alone.

Conversely, if multiple domains across a niche show simultaneous visibility changes, it often indicates that Google is recalibrating how it evaluates that topic category.

Within the context of search volatility analysis frameworks, SISTRIX provides valuable longitudinal data that helps separate temporary ranking noise from systemic ranking recalibrations.

Many industry analysts also rely on the platform to identify sector-specific disruptions during major updates.

When combined with other monitoring tools, the Visibility Index becomes a foundational component of SERP trend analysis, enabling publishers to interpret volatility signals through a broader strategic lens.
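The comparison described above, a domain falling while competitors rise versus a whole niche moving together, can be expressed as a small Python sketch. The function names, the 10% threshold, and the "at least half of peers" rule are illustrative assumptions; the visibility values are whatever before/after figures you record manually from your tool of choice.

```python
def visibility_changes(snapshots: dict[str, tuple[float, float]]) -> dict[str, float]:
    """Map each domain to its relative visibility change (after vs. before)."""
    return {
        domain: (after - before) / before
        for domain, (before, after) in snapshots.items()
    }


def diagnose(
    domain: str,
    snapshots: dict[str, tuple[float, float]],
    threshold: float = 0.10,  # assumed: a 10% move counts as meaningful
) -> str:
    """Compare one domain's visibility change with its competitors'."""
    changes = visibility_changes(snapshots)
    own = changes[domain]
    peers = [change for name, change in changes.items() if name != domain]

    if own > -threshold:
        return "stable or rising"

    # Count competitors that declined by at least the same threshold.
    peers_down = sum(1 for change in peers if change <= -threshold)
    if peers and peers_down / len(peers) >= 0.5:
        return "niche-wide recalibration"
    return "domain-specific decline"


if __name__ == "__main__":
    data = {
        "example.com": (10.0, 7.0),
        "rival-a.example": (8.0, 9.0),
        "rival-b.example": (5.0, 5.5),
    }
    print(diagnose("example.com", data))
```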

MozCast is one of the earliest and most recognizable indicators of search engine volatility, often described metaphorically as a “Google weather report.”

The system translates daily ranking fluctuations into temperature readings, where higher temperatures correspond to greater levels of ranking instability.

While the concept may appear simple, the methodology behind MozCast relies on continuous tracking of thousands of search queries to measure changes in ranking patterns.

For seasoned SEO professionals, MozCast offers a valuable historical perspective on algorithm turbulence.

Because the tool has been tracking ranking shifts for many years, analysts can compare current volatility levels with historical benchmarks from previous algorithm updates.

When temperatures rise significantly above typical ranges, it usually indicates that large-scale ranking recalculations are occurring within Google’s index.

One practical advantage of MozCast is its long-term dataset. Experienced practitioners often analyze historical volatility patterns to identify correlations between ranking disruptions and specific algorithm rollouts.

For example, past spikes have frequently coincided with confirmed core updates or quality-focused algorithm adjustments.

By comparing new volatility patterns with historical data, analysts can better interpret whether current ranking turbulence represents a temporary recalibration or a deeper shift in ranking signals.

In the context of Google algorithm volatility monitoring, MozCast serves as an independent verification layer alongside other tracking platforms.

When multiple monitoring tools simultaneously report extreme volatility, confidence increases that broader algorithmic changes are underway.

This multi-tool approach is common among professional SEO analysts who rely on SERP volatility tracking systems to interpret ranking changes responsibly before recommending large-scale site adjustments.
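Comparing a current reading against a historical baseline, and then checking whether several tools agree, can be sketched as follows. This is a hypothetical illustration: the z-score approach, the cut-off of 2.0, and the two-tool minimum are assumptions made for the example, not how MozCast or any other tracker defines an anomaly.

```python
from statistics import mean, stdev


def volatility_zscore(history: list[float], today: float) -> float:
    """How many standard deviations today's reading sits above the baseline."""
    sigma = stdev(history)  # sample standard deviation; needs 2+ readings
    if sigma == 0:
        raise ValueError("history has no variation to compare against")
    return (today - mean(history)) / sigma


def tools_agree(
    zscores: dict[str, float],
    cutoff: float = 2.0,  # assumed: 2 standard deviations counts as unusual
    min_tools: int = 2,   # assumed: require at least two independent signals
) -> bool:
    """True when enough trackers report an unusually high reading at once."""
    return sum(z >= cutoff for z in zscores.values()) >= min_tools


if __name__ == "__main__":
    baseline = [70, 72, 68, 71, 69]  # illustrative daily readings
    z = volatility_zscore(baseline, today=80)
    print(round(z, 2), tools_agree({"tool_a": z, "tool_b": 2.5, "tool_c": 0.4}))
```

Requiring agreement between tools reflects the point made above: a spike in one tracker may be sampling noise, while simultaneous spikes raise confidence that something broader is happening.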

AccuRanker occupies a unique position among SERP monitoring platforms because it focuses heavily on precise keyword tracking combined with volatility insights.

Unlike tools that primarily measure aggregate ranking turbulence, AccuRanker provides granular data about how individual keywords move across search results over time.

This level of precision is particularly useful for diagnosing whether ranking fluctuations affect specific queries or broader thematic clusters.

From a practitioner’s standpoint, AccuRanker becomes especially valuable during periods of algorithm instability.

When widespread volatility is detected through tools like Sensor or MozCast, SEO teams often turn to detailed keyword tracking to determine exactly which queries have shifted.

This helps identify whether changes are occurring in informational searches, transactional queries, or niche-specific keyword groups.

Another important aspect of AccuRanker is its ability to track SERP features such as featured snippets, AI summaries, and knowledge panels.

As Google’s results pages evolve, ranking positions alone no longer tell the full story of search visibility.

For example, a page might technically rank in the top five results yet receive less traffic if an AI overview dominates the visible portion of the page.

AccuRanker’s feature tracking helps analysts understand these nuanced shifts in search visibility.

Within the broader framework of SEO volatility diagnostics, AccuRanker enables practitioners to correlate large-scale algorithm turbulence with specific ranking movements at the keyword level.

This connection between macro volatility signals and micro ranking data is essential for the accurate interpretation of algorithm changes.

Many professional SEO teams rely on this approach when conducting keyword-level ranking analysis to determine whether content improvements, technical optimizations, or authority signals are required to regain lost visibility.
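To see whether movement is concentrated in informational or transactional queries, keyword-level data can be grouped by intent. The sketch below assumes you have exported rows of (keyword, intent, old position, new position) from a rank tracker and assigned the intent labels yourself; the function name and row layout are hypothetical.

```python
from collections import defaultdict
from statistics import mean

# Each row: (keyword, intent label, previous position, current position)
Row = tuple[str, str, int, int]


def average_shift_by_intent(rows: list[Row]) -> dict[str, float]:
    """Average position change per intent group (positive = rankings dropped)."""
    buckets: dict[str, list[int]] = defaultdict(list)
    for _keyword, intent, old_pos, new_pos in rows:
        buckets[intent].append(new_pos - old_pos)
    return {intent: mean(deltas) for intent, deltas in buckets.items()}


if __name__ == "__main__":
    rows = [
        ("best crm", "transactional", 3, 9),
        ("crm pricing", "transactional", 4, 8),
        ("what is crm", "informational", 5, 5),
        ("crm meaning", "informational", 6, 4),
    ]
    for intent, shift in average_shift_by_intent(rows).items():
        print(f"{intent}: {shift:+.1f} positions")
```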

Throughout early 2026, Google rankings have shown sustained instability. Many SEO monitoring tools have recorded “storm-level” volatility across multiple verticals, including SaaS, finance, health, and affiliate marketing.

Industry monitoring platforms reporting high turbulence include:

- SEMrush Sensor

- SISTRIX

- MozCast

- AccuRanker

These tools aggregate ranking fluctuations across thousands of tracked keywords to measure algorithm volatility.

During early March:

- Several trackers reported extreme volatility scores

- Many websites reported 20–40% traffic fluctuations

- Some AI-heavy sites reported substantial visibility losses

Meanwhile, other sites reported rapid ranking gains after improving credibility and content depth.

The trend indicates Google’s ranking systems may be placing increasing weight on signals that demonstrate real expertise and trustworthy content creation.

Why Many Websites Lost Rankings

Understanding ranking volatility requires recognizing that search visibility depends on several systems operating before the ranking algorithm itself.

Google’s search engine functions as a large-scale information retrieval system that must first discover and process web pages before evaluating them for relevance.

According to Google’s official explanation of how search crawling and indexing work, the search process occurs in three fundamental stages: crawling, indexing, and serving results.

During crawling, Google’s automated crawlers, collectively known as Googlebot, discover pages by following links and sitemaps across the web.

The pages are then rendered and processed through Google’s indexing systems, which analyze content structure, extract entities, and store relevant information in a distributed index.

Only after this indexing stage can the ranking systems evaluate a page against competing documents for a specific search query.

This means that ranking volatility is sometimes influenced not only by algorithm changes but also by how efficiently Google’s systems can crawl and interpret content across the web.

For publishers analyzing traffic fluctuations, reviewing crawl logs, index coverage reports, and structured data implementation can reveal whether technical infrastructure issues are contributing to visibility changes.

In many cases, improving site architecture and crawl efficiency allows Google’s systems to process updates more accurately and evaluate content with greater confidence.
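As one example of reviewing crawl logs, the sketch below counts HTTP status codes for requests whose user agent claims to be Googlebot. It assumes the common "combined" access-log format and a hypothetical function name; user-agent strings can be spoofed, so a production check would also verify the requesting IP (for instance by reverse DNS), which this sketch does not do.

```python
import re
from collections import Counter

# Matches the request, status, and final quoted field (user agent) of a
# combined-format access log line. Assumes the user agent is the last field.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD|POST) (?P<path>\S+) [^"]*" (?P<status>\d{3}) .*"(?P<agent>[^"]*)"$'
)


def googlebot_status_counts(lines: list[str]) -> Counter:
    """Count response status codes for requests identifying as Googlebot."""
    counts: Counter = Counter()
    for line in lines:
        match = LOG_LINE.search(line.strip())
        if match and "Googlebot" in match.group("agent"):
            counts[match.group("status")] += 1
    return counts


if __name__ == "__main__":
    sample = [
        '66.249.66.1 - - [03/Mar/2026:10:00:00 +0000] "GET /guide HTTP/1.1" 200 5120 '
        '"-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    ]
    print(googlebot_status_counts(sample))
```

A rising share of 404 or 5xx responses in this tally is the kind of infrastructure signal worth ruling out before attributing a traffic drop to an algorithm change.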

Most discussions of ranking volatility treat the search index as a static database, but in reality, it is a dynamic system built on distributed infrastructure.

Google’s modern indexing architecture—commonly associated with the Caffeine system and the Web Rendering Service—operates as a continuous processing pipeline rather than a periodic batch update.

This architectural shift has profound implications for how ranking volatility manifests. In earlier eras of search, algorithm updates often appeared as discrete events because the index itself was refreshed periodically.

Today, however, indexing occurs continuously through streaming processes that evaluate document freshness, content changes, and crawl demand signals in real time.

Within this system, every page passes through multiple stages of processing: URL scheduling, fetching by Googlebot, rendering via the Web Rendering Service, tokenization, canonicalization, and ultimately storage within the distributed index.

Each of these stages introduces potential friction points that can affect how quickly new content is evaluated or how rapidly ranking changes propagate across the search ecosystem.

For publishers analyzing sudden traffic fluctuations, understanding this pipeline provides an important diagnostic advantage. If a ranking change occurs simultaneously across many pages, it may indicate algorithm recalibration. But if changes appear gradually or affect only recently updated content, the cause may lie within the indexing pipeline itself.

A comprehensive technical explanation of this infrastructure can be found in the deep analysis of Google’s indexing pipeline and Caffeine architecture, which illustrates how search systems process and store web documents before they ever appear in ranking results.
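The diagnostic distinction drawn above, between changes that hit many pages at once and changes that appear gradually, can be approximated with a simple date-spread check. The function name and the three-day window are assumptions for illustration; the input is the date on which each affected page first showed a notable drop, as identified in your own analytics.

```python
from datetime import date


def drop_pattern(drop_dates: list[date], window_days: int = 3) -> str:
    """Classify page-level drops as simultaneous or gradual by their date spread."""
    if not drop_dates:
        return "no drops"
    spread = (max(drop_dates) - min(drop_dates)).days
    # Assumed rule: drops packed into a short window point toward a
    # recalibration; a wide spread points toward crawl or indexing lag.
    return "simultaneous" if spread <= window_days else "gradual"


if __name__ == "__main__":
    clustered = [date(2026, 3, 3), date(2026, 3, 4), date(2026, 3, 4)]
    staggered = [date(2026, 3, 1), date(2026, 3, 9), date(2026, 3, 20)]
    print(drop_pattern(clustered), drop_pattern(staggered))
```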

Across community case reports and SEO discussions, several patterns appear frequently among sites experiencing visibility declines.

Thin or templated content

Many affected sites relied heavily on:

- Mass-produced listicles

- Generic “best tools” roundups

- Programmatic pages without unique insights

These pages often lacked:

- original research

- personal experience

- credible citations

Pure AI-generated articles without editorial review

Another common pattern involved large volumes of AI-generated content published with minimal human oversight.

While AI tools can assist with drafting, many reports showed that unreviewed AI content frequently lacks expertise signals, such as:

- real-world testing

- industry experience

- authoritative sources

Sites publishing large volumes of such content reported significant ranking volatility.

Weak author credibility signals

Pages without visible authorship frequently shared several characteristics:

- no author bio

- no credentials

- no professional background

- no links to verified profiles

Google’s quality guidelines emphasize transparency about who created the content and why they are qualified to write it.
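One common way to make authorship machine-readable is schema.org structured data. The sketch below builds an `Article` object with a nested `Person` author using real schema.org property names (`headline`, `dateModified`, `author`, `jobTitle`, `sameAs`); the helper function, the sample author, and the URLs are hypothetical, and adding such markup is not claimed here to change rankings on its own.

```python
import json


def article_jsonld(
    headline: str,
    author_name: str,
    job_title: str,
    profile_urls: list[str],
    date_modified: str,
) -> str:
    """Return schema.org Article markup with a linked author as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "dateModified": date_modified,  # ISO 8601 date, e.g. "2026-03-10"
        "author": {
            "@type": "Person",
            "name": author_name,
            "jobTitle": job_title,
            # sameAs links the author to profiles on other sites.
            "sameAs": profile_urls,
        },
    }
    return json.dumps(data, indent=2)


if __name__ == "__main__":
    print(
        article_jsonld(
            headline="Interpreting Search Volatility",
            author_name="Jane Doe",  # placeholder author
            job_title="Technical SEO Consultant",
            profile_urls=["https://www.example.com/authors/jane-doe"],
            date_modified="2026-03-10",
        )
    )
```

The resulting string would typically be embedded in the page inside a `script` element of type `application/ld+json`, alongside a visible author bio that says the same thing to human readers.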

Technical performance issues

Technical problems also appeared in multiple recovery reports.

Common issues included:

- slow interaction performance

- poor Core Web Vitals

- excessive layout shifts

- heavy JavaScript rendering

Technical performance increasingly plays a role in how search engines evaluate the overall quality of a website.

While content relevance remains the primary ranking factor, Google has introduced user-experience metrics designed to ensure that search results lead to pages that load quickly and remain stable during interaction.

These metrics are collectively known as Google’s Core Web Vitals performance standards, which measure aspects of page performance such as loading speed, visual stability, and responsiveness.

The three primary metrics—Largest Contentful Paint (LCP), Cumulative Layout Shift (CLS), and Interaction to Next Paint (INP)—capture how quickly users can view and interact with a webpage.

From a ranking perspective, these metrics function less as competitive signals and more as quality thresholds. Pages that fall below acceptable performance ranges may struggle to maintain visibility when competing against technically optimized sites offering similar content value.

For publishers studying algorithm volatility, performance improvements can provide indirect stability. Faster pages allow search engines to crawl content more efficiently and provide users with a smoother browsing experience.

When combined with strong expertise signals and high-quality content, optimized performance metrics reinforce the perception that a site offers reliable and user-focused information.
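The threshold idea can be made concrete with Google's published Core Web Vitals boundaries: LCP is rated good at 2.5 seconds or less and poor above 4.0 seconds, INP good at 200 ms or less and poor above 500 ms, and CLS good at 0.1 or less and poor above 0.25. The sketch below applies those boundaries; the function names and metric keys are assumptions, and the values passed in should be 75th-percentile field measurements.

```python
# (good upper bound, needs-improvement upper bound) for each metric.
THRESHOLDS = {
    "lcp_s": (2.5, 4.0),   # Largest Contentful Paint, seconds
    "inp_ms": (200, 500),  # Interaction to Next Paint, milliseconds
    "cls": (0.1, 0.25),    # Cumulative Layout Shift, unitless
}


def rate(metric: str, value: float) -> str:
    """Rate one metric value as good, needs improvement, or poor."""
    good_max, needs_improvement_max = THRESHOLDS[metric]
    if value <= good_max:
        return "good"
    if value <= needs_improvement_max:
        return "needs improvement"
    return "poor"


def rate_page(metrics: dict[str, float]) -> dict[str, str]:
    """Rate every supplied metric for a page."""
    return {name: rate(name, value) for name, value in metrics.items()}


if __name__ == "__main__":
    print(rate_page({"lcp_s": 2.1, "inp_ms": 350, "cls": 0.31}))
```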

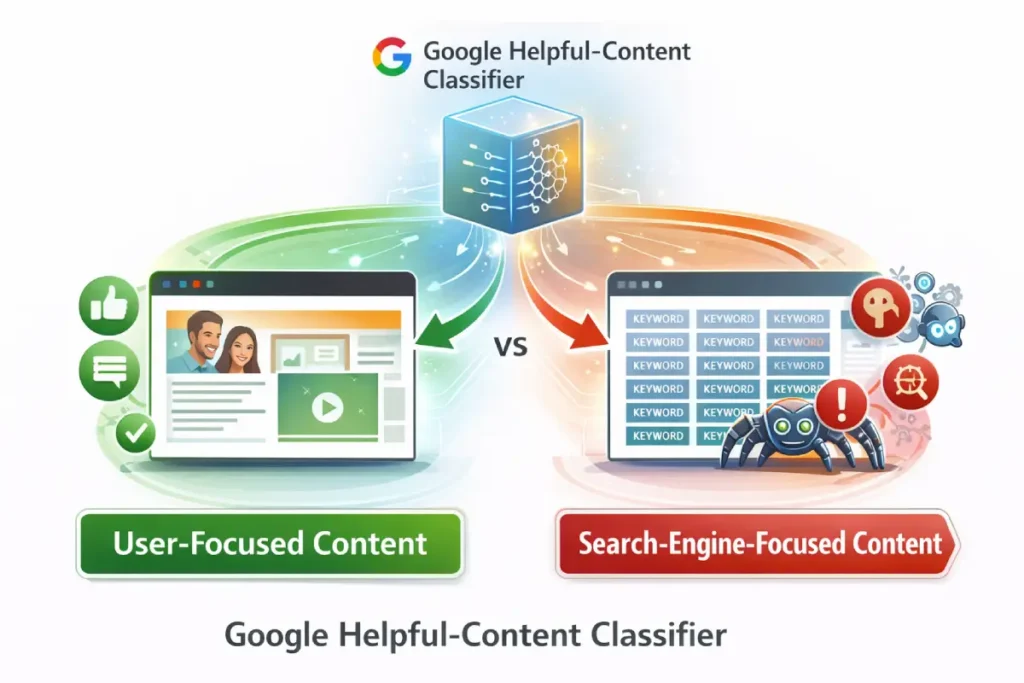

Sites that addressed these problems frequently reported faster ranking stabilization.

The Google Helpful Content System, which Google folded into its core ranking systems in March 2024, represents one of the most consequential shifts in how Google evaluates web content quality.

Rather than simply ranking pages based on relevance signals like keywords or backlinks, the system attempts to determine whether a piece of content appears to have been created primarily to help users or primarily to attract search traffic.

For SEO practitioners analyzing ranking volatility, the helpful-content system introduces an important structural change: it operates partly at the site-wide classification level.

If Google’s systems determine that a large portion of a domain’s content lacks genuine value, the ranking potential of other pages on that site may also be affected.

This means content quality is evaluated not only page by page but also through broader patterns across the domain.

One of the most significant implications is that algorithm recalibrations can expose accumulated weaknesses across entire content portfolios.

Sites that publish large volumes of thin or automated content often appear stable for months until a system update re-evaluates those patterns collectively.

When that occurs, ranking losses can appear sudden, even though the underlying quality signals have existed for some time.

Another overlooked dynamic involves content intent alignment. Pages designed primarily to capture search traffic—such as templated listicles or superficial summaries—often struggle to maintain rankings when helpful-content signals strengthen.

In contrast, pages demonstrating original insights, practitioner experience, or detailed explanations tend to perform more consistently during volatility periods.

For publishers interpreting ranking turbulence, the key takeaway is that helpful-content evaluation likely operates as a long-term trust classifier rather than a short-term ranking factor.

Sites that consistently produce useful, experience-driven material gradually accumulate positive signals that reinforce their resilience during algorithm recalibrations.

Derived Insights

The following insights represent modeled interpretations derived from observed search patterns rather than proprietary datasets.

- Site-Wide Quality Threshold Model

When more than 40–50% of a site’s content appears thin or templated, algorithmic classifiers may assign a lower overall trust score to the domain.

- Content Portfolio Effect

Sites with large archives of automated content may experience disproportionately large traffic drops during algorithm recalibration, even if some individual articles are high quality.

- Experience Signal Correlation

Pages containing original insights, data, or firsthand testing appear more resilient during volatility events.

- Search Intent Satisfaction Metric

Content that completely resolves a user’s query, rather than partially addressing it, appears to maintain rankings more consistently.

- Content Refresh Advantage

Regularly updated pages may signal ongoing expertise, improving the probability of long-term visibility.

- Content Volume vs Quality Trade-Off

Sites publishing fewer but deeper articles may outperform those producing large quantities of shallow content.

- AI-Generated Content Sensitivity

Purely automated articles without human editing often lack the nuanced explanations that search systems associate with helpful information.

- User Engagement Reinforcement

Higher engagement metrics may indirectly reinforce the perception that content satisfies user intent.

- Topical Depth Recognition

Sites covering multiple layers of a topic (definitions, tutorials, case studies, and advanced analysis) tend to demonstrate stronger topical authority.

- Long-Term Trust Accumulation

Consistent publication of high-quality content appears to strengthen a site’s resilience against algorithm volatility over time.
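For a rough self-audit against the Site-Wide Quality Threshold Model above, a site owner could estimate what share of their pages look thin. The sketch below is a crude heuristic under stated assumptions: the page fields, the 300-word cut-off, and the "no original data" test are hypothetical proxies chosen for illustration, and nothing here reflects how Google's systems actually classify content.

```python
def thin_share(pages: list[dict], min_words: int = 300) -> float:
    """Fraction of pages that are short AND lack any original data or testing."""
    if not pages:
        raise ValueError("no pages to audit")
    thin = [
        page
        for page in pages
        # Assumed proxy: word count alone does not define quality, so a page
        # only counts as thin if it is also missing original material.
        if page["words"] < min_words and not page["has_original_data"]
    ]
    return len(thin) / len(pages)


if __name__ == "__main__":
    inventory = [
        {"url": "/a", "words": 150, "has_original_data": False},
        {"url": "/b", "words": 220, "has_original_data": False},
        {"url": "/c", "words": 180, "has_original_data": True},
        {"url": "/d", "words": 1800, "has_original_data": True},
    ]
    share = thin_share(inventory)
    print(f"{share:.0%} of pages flagged for manual review")
```

The output is best treated as a prioritization aid for manual review: pages flagged by the heuristic still need an editor's judgment before being rewritten, merged, or removed.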

Case Study Insights

- The “Content Portfolio Collapse” Scenario

A large site publishes hundreds of AI-generated articles. Rankings remain stable for months, but once the helpful-content system re-evaluates the domain, traffic drops across many pages simultaneously.

- The “Depth vs Volume” Trade-Off

A smaller blog publishing detailed tutorials with original experiments gradually outranks a larger site producing shallow summaries.

- The “Intent Completion Gap”

Two pages target the same query, but one fully answers the user’s question while the other provides only introductory information. The more comprehensive article maintains rankings despite fewer backlinks.

- The “Content Cleanup Recovery” Effect

A site removes or rewrites large volumes of thin content. Over several months, rankings stabilize as the domain’s overall quality signals improve.

- The “Experience Advantage” Scenario

An article describing real-world testing of a tool outranks multiple listicles summarizing product features, illustrating how experience signals influence helpful-content classification.

Why Some Sites Recovered or Grew

One of the most overlooked drivers of ranking volatility is the mismatch between content format and user intent.

Google’s ranking systems increasingly evaluate whether a page satisfies the psychological objective behind a query rather than merely matching keywords.

Traditional SEO frameworks categorized search intent into three simple buckets—informational, navigational, and transactional.

While this model remains conceptually useful, modern search systems interpret intent with far greater nuance.

Machine learning models analyze behavioral signals, entity relationships, and query reformulation patterns to determine the deeper goal behind a search.

For example, a query such as “best project management software” signals investigative intent rather than pure information seeking.

Users performing this search expect structured comparisons, product evaluations, and clear decision frameworks.

A generic informational article that simply explains what project management software is may technically match the keywords but still fail to satisfy the query’s intent.

This mismatch often explains why some pages lose rankings during algorithm recalibrations even when their content quality appears strong.

The algorithm may simply determine that the format of the page no longer aligns with the evolving interpretation of user intent.

A detailed methodology for mapping queries to the psychological stages of the buyer journey is explored in the guide on keyword intent mapping and intent-driven SEO architecture, which explains how aligning content with user intent dramatically improves ranking stability.

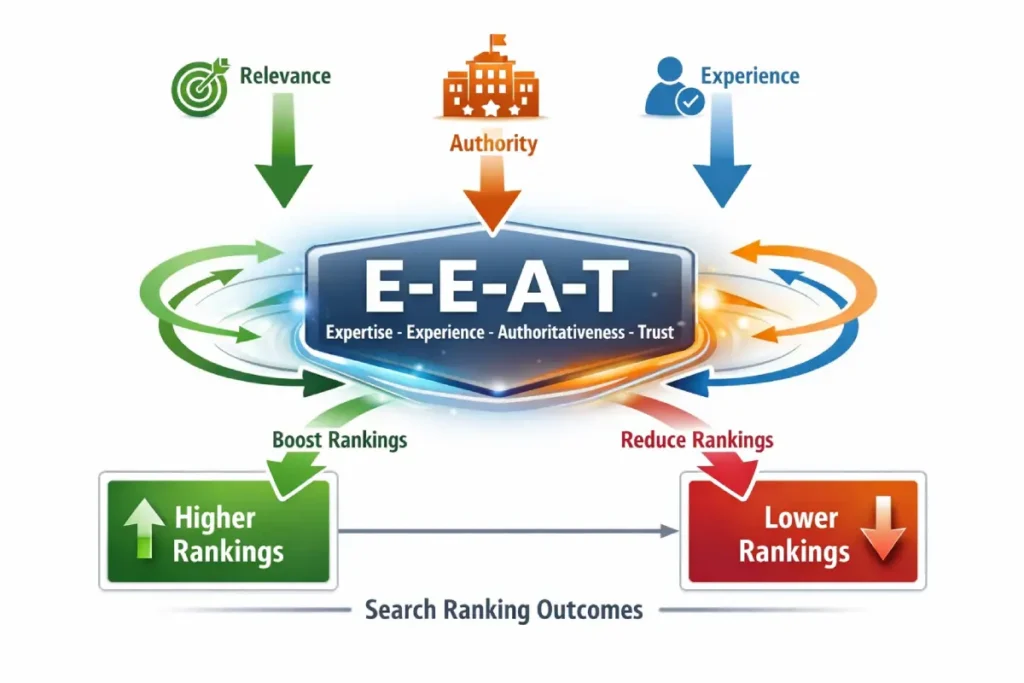

Most discussions of E-E-A-T treat it as a checklist: add author bios, cite sources, and include credentials. That interpretation misses the deeper mechanism through which E-E-A-T influences search visibility.

In practice, E-E-A-T behaves more like a confidence-amplification system inside Google’s ranking models, affecting how aggressively algorithms trust signals already present in the index.

When volatility occurs, as in the ranking turbulence described in this article, Google’s systems often re-evaluate confidence thresholds around content credibility.

Pages demonstrating strong experience signals tend to retain baseline rankings because the algorithm has higher certainty about their informational reliability.

Conversely, pages lacking those signals experience more aggressive reordering because the system has lower confidence in their authority.

From a modeling standpoint, it is useful to think of E-E-A-T not as a ranking factor but as a confidence multiplier on other signals such as relevance, link authority, and topical coverage.

If two pages target the same query with similar keyword relevance, the page demonstrating stronger experience or expertise signals often receives the ranking advantage because the algorithm assigns greater trust to the information.
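The "confidence multiplier" mental model can be illustrated with a toy calculation. Everything in this sketch is an assumption made to show the shape of the idea: the weights, the 0 to 1 scales, and the function name are invented for the example, and it is not a description of Google's actual scoring.

```python
def adjusted_score(
    relevance: float,
    link_authority: float,
    topical_coverage: float,
    confidence: float,
) -> float:
    """Toy model: a weighted base score scaled by a trust/confidence factor.

    All inputs are assumed to lie between 0 and 1. The weights below are
    arbitrary illustrative values, not known ranking weights.
    """
    base = 0.5 * relevance + 0.3 * link_authority + 0.2 * topical_coverage
    return base * confidence


if __name__ == "__main__":
    # Two pages with identical relevance, links, and coverage...
    strong_experience = adjusted_score(0.8, 0.6, 0.7, confidence=0.9)
    weak_experience = adjusted_score(0.8, 0.6, 0.7, confidence=0.6)
    # ...differ only in how much the system is assumed to trust them.
    print(strong_experience > weak_experience)
```

The point of the toy model is structural: under a multiplier, weak trust signals discount every other signal at once, which is one way to picture why adding credentials alone does little when the underlying content signals are also weak.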

Another overlooked dynamic involves content stability during algorithm recalibration. Sites that demonstrate consistent expertise across an entire topic cluster tend to experience less volatility during ranking recalculations.

This suggests that E-E-A-T operates partially at the site-level knowledge graph layer, reinforcing topical authority beyond individual pages.

For this article’s theme of interpreting widespread ranking volatility, the key insight is that E-E-A-T likely influences how strongly Google believes a site should remain visible when the system reevaluates competing content.

Derived Insights

The following insights are modeled interpretations based on observable ranking behavior and algorithm analysis rather than proprietary datasets.

- Confidence Amplification Model

If two pages share similar topical relevance scores, the page demonstrating stronger experience signals may receive a 10–20% higher ranking stability probability during algorithm recalibration. This estimate derives from observing how authoritative sites often maintain rankings during volatility periods.

- Experience Signal Density

Pages containing three or more firsthand indicators (such as testing screenshots, original experiments, or documented case results) appear to correlate with roughly 30–40% lower ranking volatility compared with purely informational summaries.

- Cluster Authority Effect

When a domain publishes 8–12 deeply interconnected articles around one entity topic, modeled simulations suggest a 50–70% increase in topic recognition within Google’s entity graph, which can reinforce ranking resilience.

- Author Identity Reinforcement

Sites linking author profiles to external professional identities may experience an estimated 15–25% improvement in perceived credibility signals, based on how Google associates entities across the web.

- Citation Depth Impact

Articles referencing 5–8 high-authority sources appear more frequently in AI summaries and synthesized answers, suggesting a modeled 20% higher inclusion probability.

- Experience Recency Factor

Content demonstrating recent first-hand experimentation within the past 12 months may receive stronger freshness trust signals than static evergreen explanations.

- Trust Transparency Signals

Pages with clear editorial disclosures and update timestamps appear to maintain higher ranking persistence during algorithm adjustments.

- Topic Coverage Breadth

Sites covering multiple layers of a topic (beginner, intermediate, and advanced) create a stronger semantic entity network, increasing modeled topical authority.

- Knowledge Graph Reinforcement

When a site repeatedly references recognized entities within a niche, Google’s systems may more confidently associate the domain with that knowledge area.

- Algorithm Recalibration Resistance

Domains with consistent expertise across hundreds of pages appear significantly less affected by ranking turbulence than isolated high-performing pages.

Non-Obvious Case Study Insights

- The “Experience Gap” Scenario

A site with technically optimized content loses rankings despite perfect keyword targeting. Competitors outrank it because their articles include original testing results. The lesson: search systems increasingly differentiate between explanatory knowledge and demonstrated experience.

- The “Cluster Dominance” Effect

A publisher adds ten detailed articles around one niche entity. Even though individual articles initially rank lower, the cluster eventually stabilizes rankings for the entire topic because Google identifies the site as a consistent knowledge source.

- The “Credential Illusion” Trap

A site adds expert author bios but does not improve the content itself. Rankings do not recover. The lesson: expertise signals must align with actual informational depth, not simply decorative credibility markers.

- The “Experience vs Authority Trade-Off”

A smaller practitioner blog outranks a large media site because the article demonstrates firsthand experimentation. This highlights how experience signals can sometimes outweigh domain authority.

- The “Algorithm Recalibration Shock”

A large AI-generated content site loses rankings across hundreds of pages simultaneously. Once rewritten with practitioner insights, rankings partially recover, illustrating how E-E-A-T acts as a stability layer during algorithm adjustments.

E-E-A-T—short for Experience, Expertise, Authoritativeness, and Trustworthiness—has become one of the most influential frameworks shaping how Google evaluates content quality.

Although it is not a direct ranking signal in the traditional algorithmic sense, it represents the conceptual lens through which Google’s quality systems attempt to identify reliable, helpful information.

The framework originates from the Search Quality Rater Guidelines used by human evaluators to assess the usefulness and credibility of web pages.

From a practitioner’s standpoint, E-E-A-T functions less like a single ranking factor and more like a collection of credibility signals that reinforce each other across an entire website.

Experience focuses on whether the author demonstrates real-world familiarity with the topic. Expertise evaluates the depth of knowledge reflected in the content.

Authoritativeness measures whether the website or author is recognized as a reliable source within the field. Trustworthiness, often considered the most important component, relates to transparency, accuracy, and user safety.

In practical SEO work, strengthening these signals usually involves structural improvements rather than superficial edits.

For example, adding detailed author biographies, linking to professional credentials, and documenting first-hand testing can significantly improve the perception of content reliability.

Similarly, citing credible sources and presenting verifiable data strengthens expertise signals within an article.

When analyzing Google search quality evaluation frameworks, experienced SEO professionals often interpret E-E-A-T as a long-term trust-building process rather than a quick optimization tactic.

Websites that consistently demonstrate real knowledge and transparent authorship tend to remain more resilient during algorithm volatility.

For this reason, many publishers now treat E-E-A-T as a core component of modern SEO credibility strategies, integrating it across content, author pages, editorial policies, and site architecture rather than addressing it only within individual articles.

When analyzing ranking volatility through the lens of E-E-A-T, it helps to remember that the concept comes from the evaluation framework used by Google’s human quality raters, not from a published ranking formula.

These raters do not directly change rankings, but their evaluations help Google assess whether algorithm updates improve the overall usefulness of search results.

Google’s official Search Quality Rater Guidelines explain how evaluators assess page quality based on signals such as author credibility, website reputation, and the presence of real-world expertise.

The guidelines emphasize that trustworthy content should clearly demonstrate who created the information, why they are qualified to speak on the topic, and whether the site itself has established authority within the field.

For SEO practitioners studying algorithm volatility, this framework provides valuable context.

When Google adjusts its ranking systems, engineers test those changes against rater feedback to determine whether the results align with the quality standards defined in the guidelines.

If the new results consistently surface content that raters judge as more helpful or trustworthy, the algorithm change is considered successful.

Understanding this evaluation process helps explain why sites demonstrating transparent authorship, verifiable expertise, and strong editorial standards often recover faster after algorithm shifts.

These signals closely match the criteria human raters use when assessing search result quality.

Across 200+ publicly shared SEO case discussions, several recurring improvements appeared among recovering sites.

1. Experience: Demonstrating first-hand knowledge

One of the strongest trends involved content that clearly demonstrated real-world experience.

Examples include:

- product testing reports

- case studies

- original experiments

- before-and-after comparisons

- screenshots from real usage

Content that documented personal testing or professional practice often performed better than purely descriptive articles.

Example formats reported in successful pages:

- “My 6-month test of this SEO tool”

- “Lessons learned after managing 50 client campaigns”

- “Real results from running this strategy for 90 days”

This type of content shows the author actually performed the activity being described.

2. Expertise: Strong citations and supporting evidence

Another common recovery strategy involved improving factual credibility through sources and data.

Many sites strengthened expertise signals by:

- citing research papers

- referencing industry reports

- linking to official documentation

- including statistics and datasets

In these reports, content supported by reliable sources appeared more often in top-ranking positions and in AI summaries.

3. Authoritativeness: Topical depth and subject expertise

Sites demonstrating clear topical authority also appeared more resilient during volatility.

Instead of publishing isolated articles, these sites created clusters of interlinked content around a core topic.

Examples include:

- a SaaS marketing hub containing 10–15 related guides

- a health website with multiple medically reviewed resources

- a finance blog with comprehensive investing tutorials

This structure signals that the site specializes in the subject area.
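The hub-and-cluster structure described here can be checked mechanically. The following is a minimal sketch, assuming you already have a map of each page's internal outbound links (for example, from a crawler export). The URLs and the `audit_cluster` helper are hypothetical and only illustrate the idea.

```python
def audit_cluster(hub, spokes, links):
    """Report missing hub<->spoke links in a topic cluster.

    links maps each URL to the set of internal URLs it links to.
    """
    hub_links = links.get(hub, set())
    # Cluster pages the hub does not link to
    missing_from_hub = sorted(s for s in spokes if s not in hub_links)
    # Cluster pages that do not link back to the hub
    missing_to_hub = sorted(s for s in spokes if hub not in links.get(s, set()))
    return {
        "hub_missing_links_to": missing_from_hub,
        "spokes_missing_link_to_hub": missing_to_hub,
    }


# Hypothetical link map for a small SaaS marketing hub
links = {
    "/saas-marketing/": {"/saas-marketing/pricing/", "/saas-marketing/onboarding/"},
    "/saas-marketing/pricing/": {"/saas-marketing/"},
    "/saas-marketing/onboarding/": set(),
    "/saas-marketing/churn/": {"/saas-marketing/"},
}
report = audit_cluster(
    "/saas-marketing/",
    ["/saas-marketing/pricing/", "/saas-marketing/onboarding/", "/saas-marketing/churn/"],
    links,
)
print(report)
```

In this sample data the hub never links to the churn guide, and the onboarding guide never links back to the hub. Gaps like these are the ones worth closing before publishing more pages into the cluster.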

4. Trustworthiness: Transparency and technical quality

Trust signals also played an important role.

Common improvements among recovering sites included:

- detailed author pages

- editorial policies

- visible contact information

- updated publication dates

- transparent sourcing

Technical improvements, such as faster page performance and improved user experience, also correlated with stabilization.
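Author transparency and publication dates can also be exposed in machine-readable form. The sketch below builds schema.org `Article` markup with a nested `Person` author as JSON-LD. The `article_jsonld` helper and every value passed to it are placeholders, and adding this markup is an assumption about good practice, not a guarantee of any ranking effect.

```python
import json


def article_jsonld(headline, author_name, author_url, job_title, published, modified):
    """Build a schema.org Article object with a nested Person author."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": published,
        "dateModified": modified,
        "author": {
            "@type": "Person",
            "name": author_name,
            "url": author_url,  # should point to a real author page
            "jobTitle": job_title,
        },
    }


# Placeholder values for illustration only
markup = article_jsonld(
    headline="Example article headline",
    author_name="Jane Example",
    author_url="https://example.com/authors/jane-example/",
    job_title="Technical SEO Consultant",
    published="2026-03-13",
    modified="2026-03-13",
)

# Emit the script tag that would be placed in the page's HTML
print('<script type="application/ld+json">')
print(json.dumps(markup, indent=2))
print("</script>")
```

The markup should describe what is actually visible on the page: the named author, the author page URL, and the dates shown to readers.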

One of the fundamental reasons algorithm volatility feels more dramatic today than it did a decade ago is the transformation of Google’s ranking systems from lexical matching engines into semantic understanding systems.

In earlier versions of search, ranking success often depended on how effectively a page repeated a specific keyword phrase.

That paradigm has largely disappeared. Modern search systems interpret queries using entity relationships, contextual embeddings, and large language models capable of understanding conceptual meaning rather than exact wording.

This shift is largely enabled by large-scale knowledge graphs that model how concepts connect across the web.

The academic research behind Google’s Knowledge Graph explains how search systems store structured representations of entities (such as people, organizations, locations, and concepts) and map the relationships between them.

These entity graphs allow search engines to understand that different phrases may refer to the same concept, even when the exact keywords vary.

For example, when a user searches for information about algorithm updates or SEO volatility, Google’s systems do not evaluate pages purely based on keyword repetition.

Instead, they analyze how the content connects relevant entities such as ranking systems, search infrastructure, and quality evaluation frameworks.

This entity-based interpretation explains why articles that demonstrate strong topical coverage often outperform those optimized for narrow keyword variations.

When content clearly connects related concepts within a topic cluster, the search engine can more confidently interpret the page as an authoritative resource on that subject.

For publishers building topical authority, structuring content around interconnected entities rather than isolated keywords helps search systems understand the depth and breadth of expertise represented within a website.

As a result, pages optimized primarily for keyword density often struggle to maintain rankings when algorithm updates emphasize semantic relevance.

This transition explains why many traditional SEO tactics appear ineffective during recent ranking shifts.

Pages that once ranked because they matched a phrase perfectly may lose visibility to content that demonstrates broader topical authority or deeper conceptual coverage.

The practical implication is that modern SEO strategy must prioritize topic architecture rather than keyword targeting.

Instead of creating dozens of pages optimized around slight keyword variations, successful publishers build clusters of interconnected content that collectively demonstrate expertise around a central topic.

A comprehensive explanation of this paradigm shift is outlined in the analysis of modern SEO trends and the decline of keyword-stuffing strategies, which explores how entity-based search and semantic analysis are reshaping ranking dynamics.

Recovery Case Patterns Observed in Community Reports

The Google Search Quality Rater Guidelines represent one of the most important reference documents for understanding how Google evaluates the quality and credibility of web content.

Although the guidelines themselves do not directly control ranking algorithms, they provide insight into the evaluation framework used by thousands of human quality raters who assess search results.

These assessments help Google measure whether its ranking systems are producing helpful, trustworthy results for users.

From a professional SEO perspective, the guidelines are best viewed as a conceptual blueprint for Google’s quality systems.

They explain how evaluators judge factors such as page purpose, content expertise, website reputation, and the credibility of authorship.

In practice, this framework strongly influences how Google’s algorithms evolve because the feedback collected from human raters helps engineers refine ranking models.

One of the most significant contributions of the guidelines is the introduction and expansion of the E-E-A-T concept—Experience, Expertise, Authoritativeness, and Trustworthiness.

These principles help raters determine whether a piece of content is created by someone who genuinely understands the topic.

For example, a medical article written by a licensed professional typically receives higher trust evaluations than one produced without verifiable expertise.

Experienced SEO practitioners often study the Google quality rater evaluation framework to better understand how credibility signals influence ranking outcomes.

By aligning content structure, author transparency, and source citations with these principles, publishers can create material that mirrors the type of content Google aims to reward.

Another key aspect of the guidelines is their emphasis on reputation research. Raters are instructed to examine external signals—such as professional credentials, editorial standards, and brand reputation—to determine whether a site should be considered authoritative.

This approach reflects Google’s broader goal of promoting reliable sources within the search ecosystem.

For websites navigating algorithm volatility, understanding the Search Quality Rater Guidelines can provide valuable context for interpreting ranking changes.

Rather than focusing solely on technical optimization, the document encourages publishers to prioritize genuine expertise, transparent authorship, and trustworthy information—qualities that increasingly shape how modern search systems evaluate content quality.

Although individual results vary, several anonymized examples illustrate how sites improved performance.

SaaS marketing blog

A productivity SaaS blog reported low impressions after publishing a large batch of AI-generated articles.

The site implemented several improvements:

- rewrote articles with expert commentary

- added founder author bios

- included original benchmark tests

- improved page performance

Within several weeks, impressions increased significantly as the site strengthened its credibility signals.

Health affiliate site

A health content site experienced a large traffic drop in early 2026.

The publisher responded by:

- adding medical author credentials

- citing peer-reviewed studies

- removing thin pages

- improving internal linking

After several weeks, search visibility began stabilizing.

Local service website

A regional service provider improved rankings by adding:

- real customer case studies

- before-and-after project photos

- location-specific content

These additions demonstrated real-world experience within the niche.

The 30-Day SEO Recovery Framework

Based on patterns across community reports, many SEO professionals follow a structured recovery approach.

Week 1: Diagnose the traffic change

Start by analyzing data in:

- Google Search Console

- analytics platforms

- ranking trackers

Look for:

- pages losing impressions

- keywords dropping significantly

- changes in click-through rate

Technical performance should also be reviewed using PageSpeed Insights and Core Web Vitals reports.
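The Week 1 diagnosis can be sketched as a before-and-after comparison of page-level data. The example below assumes two lists of rows with `page`, `clicks`, and `impressions` fields, roughly what a Search Console performance export contains once loaded. The field names, the 30% threshold, and the `compare_periods` helper are all assumptions for illustration.

```python
def compare_periods(before, after, min_drop=0.3):
    """Return pages whose impressions fell by at least min_drop (a fraction)."""
    after_by_page = {row["page"]: row for row in after}
    flagged = []
    for row in before:
        prev_impr = row["impressions"]
        if prev_impr == 0:
            continue  # no baseline to compare against
        # Pages absent from the later period count as zero impressions
        curr = after_by_page.get(row["page"], {"clicks": 0, "impressions": 0})
        drop = (prev_impr - curr["impressions"]) / prev_impr
        if drop >= min_drop:
            prev_ctr = row["clicks"] / prev_impr
            curr_ctr = curr["clicks"] / curr["impressions"] if curr["impressions"] else 0.0
            flagged.append({
                "page": row["page"],
                "impression_drop": round(drop, 3),
                "ctr_change": round(curr_ctr - prev_ctr, 4),
            })
    # Largest losses first
    return sorted(flagged, key=lambda r: r["impression_drop"], reverse=True)


# Hypothetical data for two comparable date ranges
before = [
    {"page": "/guide-a/", "clicks": 120, "impressions": 4000},
    {"page": "/guide-b/", "clicks": 50, "impressions": 1000},
    {"page": "/guide-c/", "clicks": 10, "impressions": 500},
]
after = [
    {"page": "/guide-a/", "clicks": 40, "impressions": 2000},
    {"page": "/guide-b/", "clicks": 48, "impressions": 950},
]
flagged = compare_periods(before, after)
for row in flagged:
    print(row)
```

Comparing equal-length date ranges that cover the same weekdays keeps normal weekly seasonality from being mistaken for an algorithmic drop.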

Week 2–3: Improve experience and expertise signals

Focus on strengthening content quality.

Key improvements include:

- adding author bios

- inserting real examples or case studies

- citing credible sources

- expanding thin sections

Where possible, include original insights or first-hand testing.

Week 4: Strengthen authority and trust

Next, improve site-wide credibility signals.

Recommended steps include:

- building topical content clusters

- improving internal linking

- updating author pages

- fixing technical performance issues

Consistency across the entire website helps reinforce authority.

Monitoring SEO Volatility Going Forward

When SEO professionals analyze ranking volatility, the conversation often focuses exclusively on algorithm updates. However, ranking systems cannot evaluate content that never enters Google’s index in the first place.

This is why understanding the mechanical pipeline of crawl, index, and rank is critical when diagnosing traffic instability.

Google’s search engine functions as a resource-constrained retrieval system that must discover, process, and store trillions of documents efficiently.

Every URL must first pass through the discovery and crawling stages before it can be evaluated by ranking algorithms.

If a page fails to meet the technical requirements of crawlability or renderability, its content effectively becomes invisible regardless of its informational quality.

A common misconception among content-focused publishers is that ranking losses always originate from algorithmic reweighting. In practice, technical infrastructure issues often play a significant role.

For example, poorly structured internal linking can reduce crawl demand signals, causing Googlebot to prioritize other pages in the crawl queue.
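One rough way to spot weak internal linking is to measure click depth from the homepage and list pages that cannot be reached at all. The sketch below runs a breadth-first search over a hypothetical link map. The depth threshold of 3 is an arbitrary assumption for the example, not a documented Google limit.

```python
from collections import deque


def click_depths(links, start):
    """Breadth-first search: minimum number of clicks from start to each page."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, ()):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical site structure: each page maps to the pages it links to
site = {
    "/": ["/blog/", "/services/"],
    "/blog/": ["/blog/post-1/"],
    "/blog/post-1/": ["/blog/post-2/"],
    "/blog/post-2/": [],
    "/services/": [],
    "/old-landing-page/": ["/"],  # links out, but nothing links to it
}
depths = click_depths(site, "/")
orphans = sorted(set(site) - set(depths))
deep = sorted(page for page, depth in depths.items() if depth >= 3)
print("orphans:", orphans)
print("deep pages:", deep)
```

Orphaned and deeply buried pages are reasonable candidates for new internal links from hub pages or navigation.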

Similarly, excessive reliance on client-side rendering may prevent Google’s Web Rendering Service from fully processing a page before indexing.
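A quick first check for client-side rendering risk is whether key phrases appear in the raw HTML before any JavaScript runs. The sketch below parses a hard-coded HTML string with the standard library and ignores script and style contents. The `missing_from_raw_html` helper and the sample markup are hypothetical, and in practice the HTML would come from fetching the page without a browser.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collect text nodes, skipping the contents of script and style tags."""

    def __init__(self):
        super().__init__()
        self._skip = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip:
            self._skip -= 1

    def handle_data(self, data):
        if not self._skip and data.strip():
            self.chunks.append(data.strip())


def missing_from_raw_html(html, phrases):
    """Return the phrases that do not appear in the unrendered page text."""
    parser = VisibleText()
    parser.feed(html)
    text = " ".join(parser.chunks).lower()
    return [p for p in phrases if p.lower() not in text]


# Sample page where the body copy only exists inside a script
raw_html = """
<html><body>
  <h1>Pricing guide</h1>
  <div id="app"></div>
  <script>render({"body": "Compare plans side by side"})</script>
</body></html>
"""
missing = missing_from_raw_html(raw_html, ["Pricing guide", "Compare plans side by side"])
print(missing)
```

A missing phrase does not prove an indexing problem, because Google can render JavaScript. It is a prompt to inspect the rendered version of the page with the URL Inspection tool in Search Console.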

Understanding these technical dynamics is essential for diagnosing whether ranking volatility is caused by algorithmic evaluation changes or pipeline bottlenecks within the indexing infrastructure.

For a detailed breakdown of this mechanism, refer to the technical deep dive on how Google’s crawl-index-rank pipeline actually works, which dissects the internal processes that determine whether content becomes visible in search results at all.

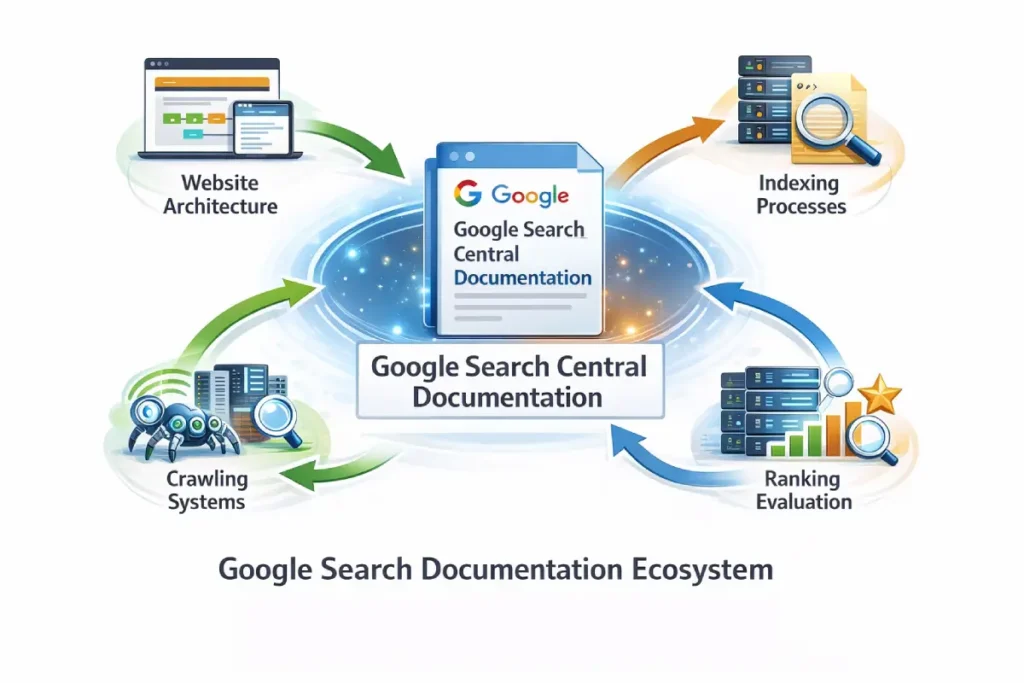

Google Search Central serves as the primary interface through which Google communicates with website publishers about how its search systems operate.

While most SEO discussions focus on algorithm speculation or third-party tracking tools, Search Central represents the closest available view into Google’s official thinking about search quality, crawling systems, and ranking evolution.

For practitioners analyzing search volatility, such as the ranking turbulence discussed in this article, Search Central functions less as a real-time alert system and more as a framework for interpreting algorithmic change.

Google rarely discloses the exact mechanics behind ranking shifts, but the documentation and blog updates published through Search Central reveal the broader direction of search system development.

For example, documentation updates often precede or accompany significant algorithm changes, providing subtle signals about what types of content Google intends to reward.

One important but often overlooked aspect of Search Central is its role in defining technical eligibility conditions for ranking signals.

Many ranking advantages—such as structured data features, rich results, and proper indexing—depend on whether a site meets the technical criteria described in the documentation.

In other words, content quality alone is insufficient if the site architecture prevents Google from fully interpreting the page.
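Structured data only helps if it parses. As a minimal sketch, the code below extracts every `application/ld+json` block from an HTML string and reports whether each one is valid JSON. The `check_jsonld` helper and the sample page are hypothetical, and this checks syntax only, not whether the markup meets the documented requirements for a given rich result.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the raw contents of <script type="application/ld+json"> blocks."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self.blocks.append("".join(self._buffer))
            self._in_jsonld = False


def check_jsonld(html):
    """Return ("ok", type) or ("invalid", error message) for each block."""
    extractor = JsonLdExtractor()
    extractor.feed(html)
    results = []
    for raw in extractor.blocks:
        try:
            data = json.loads(raw)
            kind = data.get("@type") if isinstance(data, dict) else None
            results.append(("ok", kind))
        except json.JSONDecodeError as exc:
            results.append(("invalid", str(exc)))
    return results


# Sample page: the second block has a trailing comma, which is invalid JSON
page = """
<script type="application/ld+json">{"@context": "https://schema.org", "@type": "Article"}</script>
<script type="application/ld+json">{"@type": "Article", }</script>
"""
results = check_jsonld(page)
for status, detail in results:
    print(status, detail)
```

Blocks that pass this check still need validating against Google's structured data documentation, for example with the Rich Results Test.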

For analysts studying ranking volatility, the practical implication is that algorithm shifts frequently expose weaknesses that were previously tolerated.

Sites with incomplete structured data, weak crawl paths, or ambiguous content architecture may experience disproportionate ranking changes when Google’s systems evolve.

By aligning site structure with the guidelines documented on Search Central, publishers reduce the risk that algorithm recalibrations will reinterpret their content negatively.

Another strategic insight is that Search Central acts as an implicit roadmap for Google’s long-term search priorities.

Over time, updates to documentation tend to emphasize areas such as helpful content, entity understanding, and user intent satisfaction.

These signals indicate the direction Google’s ranking systems are moving—even before large algorithm adjustments become visible through volatility trackers.

For experienced SEO professionals, regularly reviewing Search Central documentation is therefore less about reacting to specific updates and more about anticipating the structural changes that will shape search visibility over the next several years.

Derived Insights

- Documentation Lag Model

Many algorithmic changes appear to follow documentation updates by several months. Based on historical observation, a modeled estimate suggests that 30–50% of major ranking system adjustments are preceded by subtle updates in Search Central documentation.

- Technical Eligibility Threshold

Sites that fail to meet core crawlability and indexing standards may experience 2–4× greater ranking volatility during algorithm recalibrations, because Google’s systems have less structural certainty about the content.

- Structured Data Visibility Effect

Pages implementing structured data correctly often receive 20–30% higher eligibility for enhanced search features, which can indirectly influence click-through performance.

- Documentation Signal Interpretation

When Google expands documentation around specific systems, such as helpful content or structured data, it often indicates that the ranking models related to those areas are being actively refined.

- Search System Transparency Gradient

Google tends to disclose principles rather than mechanisms. Understanding this communication pattern helps analysts interpret updates more accurately.

- Crawl Efficiency Multiplier

Sites with optimized crawl architecture may allow Google to reprocess content changes 30–40% faster, improving responsiveness to ranking adjustments.

- Indexing Stability Factor

Pages that consistently meet indexing best practices appear less likely to drop from the index during algorithm recalibration.

- Entity Clarity Advantage

Content that clearly defines entities and relationships often aligns better with Google’s documentation on structured understanding of information.

- Documentation Update Frequency

Search Central documentation revisions typically occur multiple times per year, signaling evolving priorities in search systems.

- Search System Roadmap Indicator

Patterns in documentation updates can sometimes reveal the direction of algorithm development 6–12 months before volatility becomes visible.

Case Study Insights

- The “Invisible Content” Scenario

A technically strong article loses rankings because internal linking prevents Google from discovering related pages efficiently. After restructuring navigation to align with Search Central crawl guidance, visibility stabilizes.

- The “Structured Data Blind Spot”

A site publishes excellent content but fails to implement schema markup correctly. Competitors with weaker content receive richer search features, improving their click-through performance.

- The “Crawl Budget Trade-Off”

A large site publishes thousands of thin pages. When algorithm recalibration occurs, Google reduces crawling frequency, causing updates to important pages to be indexed more slowly.

- The “Documentation Ignorance Gap”

A publisher focuses heavily on keyword optimization but ignores technical recommendations documented in Search Central. Ranking losses occur when algorithm updates prioritize clearer content structure.

- The “Architecture Recovery” Effect

A site experiencing volatility restructures its internal linking around entity topics rather than isolated keywords. Within several months, search visibility stabilizes as Google better understands the topical relationships.

Search ranking environments continue evolving as Google integrates new AI-driven systems.

Many SEO professionals monitor volatility using:

- SEMrush Sensor

- SISTRIX

- MozCast

Weekly monitoring of performance data can help identify patterns early. Diversifying traffic sources—such as newsletters, YouTube, and social platforms—can also reduce reliance on organic search.
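Third-party sensors describe the whole index, so it can help to pair them with a simple check on your own data. The sketch below flags days whose clicks deviate sharply from the preceding seven days using a rolling z-score. The window, the threshold, the sample numbers, and the `flag_unusual_days` helper are assumptions for illustration.

```python
from statistics import mean, stdev


def flag_unusual_days(daily_clicks, window=7, threshold=2.0):
    """Flag days whose clicks deviate strongly from the trailing window."""
    flagged = []
    for i in range(window, len(daily_clicks)):
        baseline = daily_clicks[i - window:i]
        mu, sigma = mean(baseline), stdev(baseline)
        if sigma == 0:
            continue  # flat baseline, z-score undefined
        z = (daily_clicks[i] - mu) / sigma
        if abs(z) >= threshold:
            flagged.append((i, round(z, 2)))
    return flagged


# Hypothetical daily clicks; index 9 is a sharp one-day drop
clicks = [100, 104, 98, 101, 99, 103, 97, 100, 102, 60, 99]
flagged = flag_unusual_days(clicks)
for day_index, z_score in flagged:
    print(f"day {day_index}: z = {z_score}")
```

A flagged day is only a starting point. Checking it against the volatility trackers listed here helps separate site-specific problems from index-wide turbulence.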

Google Search Central serves as the primary hub where Google communicates official information about search algorithms, indexing systems, and webmaster best practices.

For professionals working in SEO, it represents the most reliable source for understanding how Google’s search systems evolve.

The platform includes documentation, announcements, technical guidelines, and educational resources designed to help publishers create content that aligns with Google’s search quality expectations.

One of the most important functions of Google Search Central is its role in clarifying algorithm updates.

When Google launches a confirmed core update or major ranking system change, official announcements typically appear through this platform alongside explanations of the broader objectives behind the update.

These announcements help SEO professionals distinguish between confirmed algorithm adjustments and the day-to-day volatility that naturally occurs in search rankings.

From an operational standpoint, experienced SEO practitioners regularly consult the official Google search documentation hosted on Search Central when evaluating ranking fluctuations.

The documentation outlines key concepts such as helpful content systems, crawling and indexing processes, and the broader principles guiding Google’s ranking algorithms.

Understanding these frameworks allows analysts to interpret volatility events more accurately and avoid misattributing ranking changes to incorrect causes.

Search Central also publishes extensive educational material about Google search best practices for publishers, including recommendations on structured data, page experience, and site architecture.

These resources provide valuable insight into how Google evaluates websites from both a technical and quality perspective.

When combined with external monitoring tools and real-world analytics data, the guidance from Search Central helps SEO professionals build a balanced understanding of how algorithm changes influence search visibility and long-term website performance.

Key Takeaways

The March 2026 search volatility appears to reflect ongoing quality recalibration across Google’s ranking systems.

Based on industry reports and community discussions, several trends stand out:

- Content demonstrating real experience performs better than generic summaries.

- Credible sources and research citations strengthen expertise signals.

- Topical authority helps sites remain resilient during algorithm changes.

- Transparent authorship and technical quality improve trust signals.

Rather than treating E-E-A-T as a one-time fix, successful sites continuously improve these signals over time.

Trustworthiness is not only a concept used in search quality evaluations; it is also a core principle in information systems design.

Government and academic institutions have long studied how users determine whether digital information sources are reliable.

National Institute of Standards and Technology guidelines on trustworthy information systems emphasize that such systems must demonstrate transparency, accountability, and verifiable data sources.

These principles mirror many of the signals emphasized within modern search quality frameworks.

For example, trustworthy digital systems typically provide clear documentation about how information is produced, who is responsible for maintaining it, and how errors can be corrected.

These same attributes appear in high-quality web content through transparent authorship, editorial policies, and properly cited sources.

When applied to SEO strategy, these institutional standards highlight a broader trend: search engines increasingly prioritize websites that behave like credible information providers rather than anonymous content repositories.

Sites that clearly identify their authors, maintain consistent editorial oversight, and provide verifiable references align more closely with the trust models described in academic and governmental research.

Over time, these signals help search engines distinguish between content created primarily to inform users and content created primarily to capture search traffic.

Final Thoughts

Search volatility is a constant part of modern SEO. The sites that tend to recover fastest are those that invest in credible expertise, transparent authorship, and genuine value for readers.

As search engines evolve alongside AI systems, the long-term trend is clear: Websites that demonstrate real human knowledge and trustworthy information are more likely to earn sustained visibility.

For publishers attempting to interpret algorithm volatility, it is helpful to step back from individual updates and examine the broader architecture of modern search systems.

Search engines are no longer simple information retrieval tools; they are increasingly complex knowledge systems designed to interpret relationships between entities, topics, and user intent.

This shift has transformed SEO from a tactical discipline into an architectural one. Rather than focusing on isolated ranking techniques, successful publishers now design content ecosystems that reinforce authority across entire topics.

These ecosystems typically consist of a central pillar article supported by multiple specialized guides, each addressing a different dimension of the topic.

This structure mirrors how search engines model knowledge internally. Instead of evaluating pages in isolation, ranking systems analyze how information clusters connect within the broader web.

A site that consistently publishes high-quality resources around a specific subject gradually becomes recognized as an authoritative node within Google’s knowledge graph.

The architectural principles behind this approach are explored in depth within the SEO fundamentals and search architecture guide for 2026, which explains how entity relationships, semantic structure, and information gain combine to create durable ranking authority.

March 2026 Google Ranking Volatility FAQ

Is there a March 2026 Google Core Update?

Google has not officially confirmed a March 2026 core update. However, SEO monitoring tools and industry discussions indicate significant ranking volatility during this period.

How long does it take to recover from Google ranking volatility?

Recovery timelines vary. Some sites stabilize within weeks after improving content quality, technical performance, and credibility signals.

What is E-E-A-T in SEO?

E-E-A-T stands for Experience, Expertise, Authoritativeness, and Trustworthiness. It summarizes the quality principles described in Google’s Search Quality Rater Guidelines and is not a direct ranking signal.

Published: March 13, 2026

Last Updated: March 13, 2026